Picture by Writer

# Introduction

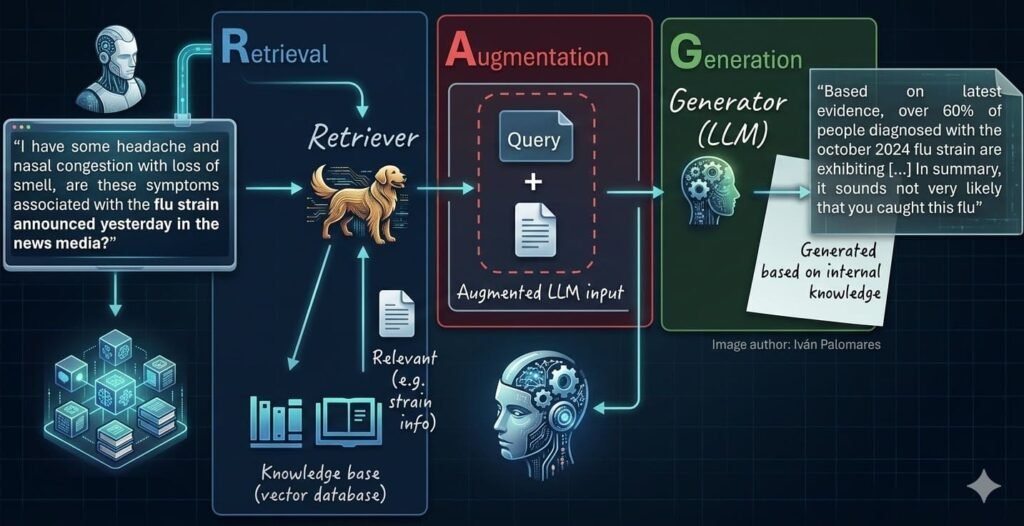

Retrieval-augmented technology (RAG) techniques are, merely put, the pure evolution of standalone massive language fashions (LLMs). RAG addresses a number of key limitations of classical LLMs, like mannequin hallucinations or an absence of up-to-date, related information wanted to generate grounded, fact-based responses to person queries.

In a associated article collection, Understanding RAG, we offered a complete overview of RAG techniques, their traits, sensible issues, and challenges. Now we synthesize a part of these classes and mix them with the most recent traits and strategies to explain seven key steps deemed important to mastering the event of RAG techniques.

These seven steps are associated to totally different phases or parts of a RAG atmosphere, as proven within the numeric labels ([1] to [7]) within the diagram under, which illustrates a classical RAG structure:

7 Steps to Mastering RAG Methods (see numbered labels 1-7 and listing under)

- Choose and clear knowledge sources

- Chunking and splitting

- Embedding/vectorization

- Populate vector databases

- Question vectorization

- Retrieve related context

- Generate a grounded reply

# 1. Deciding on and Cleansing Knowledge Sources

The “rubbish in, rubbish out” precept takes its most significance in RAG. Its worth is instantly proportional to the relevance, high quality, and cleanliness of the supply textual content knowledge it might probably retrieve. To make sure high-quality information bases, establish high-value knowledge silos and periodically audit your bases. Earlier than ingesting uncooked knowledge, carry out an efficient cleansing course of by means of sturdy pipelines that apply essential steps like eradicating personally identifiable data (PII), eliminating duplicates, and addressing different noisy components. It is a steady engineering course of to be utilized each time new knowledge is integrated.

You’ll be able to learn by means of this text to get an summary of information cleansing strategies.

# 2. Chunking and Splitting Paperwork

Many situations of textual content knowledge or paperwork, like literature novels or PhD theses, are too massive to be embedded as a single knowledge occasion or unit. Chunking consists of splitting lengthy texts into smaller components that retain semantic significance and maintain contextual integrity. It requires a cautious strategy: not too many chunks (incurring doable lack of context), however not too few both — outsized chunks have an effect on semantic search afterward!

There are numerous chunking approaches: from these based mostly on character rely to these pushed by logical boundaries like paragraphs or sections. LlamaIndex and LangChain, with their related Python libraries, can actually assist with this activity by implementing extra superior splitting mechanisms.

Chunking can also take into account overlap amongst components of the doc to protect consistency within the retrieval course of. For the sake of illustration, that is what such chunking might appear like over a small, toy-sized textual content:

Chunking paperwork in RAG techniques with overlap | Picture by Writer

In this installment of the RAG collection, you may also study the additional function of doc chunking processes in managing the context measurement of RAG inputs.

# 3. Embedding and Vectorizing Paperwork

As soon as paperwork are chunked, the following step earlier than having them securely saved within the information base is to translate them into “the language of machines”: numbers. That is sometimes executed by changing every textual content right into a vector embedding — a dense, high-dimensional numeric illustration that captures semantic traits of the textual content. Lately, specialised LLMs to do that activity have been constructed: they’re referred to as embedding fashions and embrace well-known open-source choices like Hugging Face’s all-MiniLM-L6-v2.

Study extra about embeddings and their benefits over classical textual content illustration approaches in this text.

# 4. Populating the Vector Database

In contrast to conventional relational databases, vector databases are designed to successfully allow the search course of by means of high-dimensional arrays (embeddings) that symbolize textual content paperwork — a essential stage of RAG techniques for retrieving related paperwork to the person’s question. Each open-source vector shops like FAISS or freemium alternate options like Pinecone exist, and may present wonderful options, thereby bridging the hole between human-readable textual content and math-like vector representations.

This code excerpt is used to separate textual content (see level 2 earlier) and populate a neighborhood, free vector database utilizing LangChain and Chroma — assuming we now have an extended doc to retailer in a file referred to as knowledge_base.txt:

from langchain_community.document_loaders import TextLoader

from langchain_text_splitters import RecursiveCharacterTextSplitter

from langchain_community.embeddings import HuggingFaceEmbeddings

from langchain_community.vectorstores import Chroma

# Load and chunk the information

docs = TextLoader(“knowledge_base.txt”).load()

chunks = RecursiveCharacterTextSplitter(chunk_size=500, chunk_overlap=50).split_documents(docs)

# Create textual content embeddings utilizing a free open-source mannequin and retailer in ChromaDB

embedding_model = HuggingFaceEmbeddings(model_name=”all-MiniLM-L6-v2″)

vector_db = Chroma.from_documents(paperwork=chunks, embedding=embedding_model, persist_directory=”./db”)

print(f”Efficiently saved {len(chunks)} embedded chunks.”)

Learn extra about vector databases right here.

# 5. Vectorizing Queries

Person prompts expressed in pure language usually are not instantly matched to saved doc vectors: they have to be translated too, utilizing the identical embedding mechanism or mannequin (see step 3). In different phrases, a single question vector is constructed and in contrast in opposition to the vectors saved within the information base to retrieve, based mostly on similarity metrics, essentially the most related or comparable paperwork.

Some superior approaches for question vectorization and optimization are defined in this half of the Understanding RAG collection.

# 6. Retrieving Related Context

As soon as your question is vectorized, the RAG system’s retriever performs a similarity-based search to seek out the closest matching vectors (doc chunks). Whereas conventional top-k approaches typically work, superior strategies like fusion retrieval and reranking can be utilized to optimize how retrieved outcomes are processed and built-in as a part of the ultimate, enriched immediate for the LLM.

Take a look at this associated article for extra about these superior mechanisms. Likewise, managing context home windows is one other necessary course of to use when LLM capabilities to deal with very massive inputs are restricted.

# 7. Producing Grounded Solutions

Lastly, the LLM comes into the scene, takes the augmented person’s question with retrieved context, and is instructed to reply the person’s query utilizing that context. In a correctly designed RAG structure, by following the earlier six steps, this normally results in extra correct, defensible responses which will even embrace citations to our personal knowledge used to construct the information base.

At this level, evaluating the standard of the response is significant to measure how the general RAG system behaves, and signaling when the mannequin might have fine-tuning. Analysis frameworks for this finish have been established.

# Conclusion

RAG techniques or architectures have turn into an virtually indispensable facet of LLM-based purposes, and industrial, large-scale ones hardly ever miss them these days. RAG makes LLM purposes extra dependable and knowledge-intensive, they usually assist these fashions generate grounded responses based mostly on proof, typically predicated on privately owned knowledge in organizations.

This text summarizes seven key steps to mastering the method of developing RAG techniques. After you have this elementary information and abilities down, you’ll be in a superb place to develop enhanced LLM purposes that unlock enterprise-grade efficiency, accuracy, and transparency — one thing not doable with well-known fashions used on the Web.

Iván Palomares Carrascosa is a pacesetter, author, speaker, and adviser in AI, machine studying, deep studying & LLMs. He trains and guides others in harnessing AI in the true world.