Transformers power modern NLP systems, replacing earlier RNN and LSTM approaches. Their ability to process all words in parallel enables efficient and scalable language modeling, forming the backbone of models like GPT and Gemini.

In this article, we break down how Transformers work, starting from text representation and moving through self-attention, multi-head attention, and the full Transformer block, showing how these components come together to generate language effectively.

How transformers power models like GPT, Claude, and Gemini

Modern AI systems use transformer architectures for their ability to handle large-scale language processing tasks. These models are trained on large text datasets so they can learn language patterns, and each vendor adapts the base architecture to its own needs. The GPT models (GPT-4, GPT-5) use decoder-only Transformers, i.e., a stack of decoder layers with masked self-attention. Claude (Anthropic) and Gemini (Google) also use similar transformer stacks with their own custom modifications. Google’s Gemma models build on the transformer design from the “Attention Is All You Need” paper and generate text one token at a time.

Part 1: How Text Becomes Machine-Readable

The first step is converting text into a numerical form that the transformer can process. This begins with tokenization, which splits text into discrete tokens, followed by embeddings, which map those tokens to vectors. The model also needs positional encodings, which tell it how the words are arranged in a sentence. In this section we break down each step.

Step 1: Tokenization: Converting Text into Tokens

At its core, an LLM cannot directly ingest raw text characters. Neural networks operate on numbers, not text. Tokenization converts a text string into separate pieces, each of which receives its own numeric identifier.

Why LLMs Can’t Understand Raw Text

The model requires numeric input, but raw text is just a character string. A simple word-to-index mapping does not work well because language has an effectively unbounded set of word forms: tenses, plurals, and newly coined vocabulary. Raw text does not carry the numerical structure that neural networks need for their computations. For example, take the sentence:

Transformers changed natural language processing

This must first be converted into a sequence of tokens before the model can process it.

How Tokenization Works

Tokenization segments text into smaller units that correspond to linguistic components. A token can be a word, a subword, a character, or a punctuation mark.

For instance:

The model represents each token with a unique numerical ID, which it needs for both training and inference.
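To make the token-to-ID step concrete, here is a minimal sketch. It assumes a toy word-and-punctuation tokenizer and a vocabulary built on the fly; the function names `simple_tokenize` and `build_vocab` are made up for illustration, and real LLM tokenizers use learned subword vocabularies instead.

```python
import re

def simple_tokenize(text):
    """Split text into lowercase word and punctuation tokens (toy scheme)."""
    return re.findall(r"\w+|[^\w\s]", text.lower())

def build_vocab(tokens):
    """Assign each distinct token an integer ID in order of first appearance."""
    vocab = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab

sentence = "Transformers changed natural language processing."
tokens = simple_tokenize(sentence)
vocab = build_vocab(tokens)
token_ids = [vocab[tok] for tok in tokens]

print(tokens)
print(token_ids)
```

The model never sees the strings themselves, only the list of integer IDs.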

Types of Tokens Used in LLMs

Different tokenization strategies exist depending on the model architecture and vocabulary design. The methods include Byte-Pair Encoding (BPE), WordPiece, and Unigram. These methods keep common words as single tokens while splitting rare words into meaningful pieces.

The word “transformers” stays whole, while “unbelievability” breaks down into “un”, “believ”, and “ability”. Subword tokenization lets models handle new or rare words by using known word pieces. Tokenizers treat word pieces as the basic units and handle special tokens and punctuation marks as separate units.
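The splitting behaviour can be sketched with a greedy longest-match rule over a known vocabulary. This is a simplified, WordPiece-like illustration rather than BPE or any production tokenizer; the vocabulary `toy_vocab` and the `[UNK]` fallback are assumptions made for the example.

```python
def subword_tokenize(word, vocab):
    """Greedy longest-match-first split of a word into known pieces."""
    pieces = []
    start = 0
    while start < len(word):
        end = len(word)
        # Shrink the candidate substring until it matches a vocabulary entry.
        while end > start and word[start:end] not in vocab:
            end -= 1
        if end == start:
            return ["[UNK]"]  # no known piece starts at this character
        pieces.append(word[start:end])
        start = end
    return pieces

# Hypothetical vocabulary, invented for illustration.
toy_vocab = {"transformers", "un", "believ", "ability", "able"}

print(subword_tokenize("transformers", toy_vocab))
print(subword_tokenize("unbelievability", toy_vocab))
```

A common word that is in the vocabulary comes back as a single token, while the rare word is assembled from smaller known pieces.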

Step 2: Token Embeddings: Turning Tokens into Vectors

The model looks up an embedding vector for each token ID. These token embeddings represent word meaning as dense numeric vectors.

What is an Embedding?

An embedding is a numeric vector representation of a token. You can think of it as each token having coordinates in a high-dimensional space. The word “cat” might map to a vector with 768 dimensions, for example. The model learns these embeddings during training. Tokens with similar meanings end up with vectors that reflect their relationship to one another. The words “Hello” and “Hi” have close embedding values, while “Hello” and “Goodbye” are far apart.

Hi: [0.25, -0.18, 0.91, …], Hello: [0.27, -0.16, 0.88, …]

Here we can see that the embeddings of Hi and Hello are quite similar, while the embeddings of Hi and Goodbye are far apart.

Hi: [0.25, -0.18, 0.91, …], Goodbye: [-0.60, 0.75, -0.20, -0.55]

Semantic Meaning in Vector Space

Embeddings capture meaning, which lets us assess relationships through vector similarity measurements. The vectors for “cat” and “dog” lie closer together than those for “cat” and “table” because their semantic relationship is stronger. The model picks up word similarity at this initial stage of processing. A token’s embedding starts out as a basic, context-free meaning: it reflects only the word itself. Context is brought in afterward by the attention mechanism. At this stage, the embedding for “cat” encodes that it is an animal, while the embedding for “run” encodes that it describes motion.

For instance:

- The words king and queen tend to appear close together.

- The fruits apple and banana tend to cluster together.

- The words car and vehicle occupy nearby regions of the space.

- This spatial structure lets models develop an understanding of how words are connected.
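One common way to measure this closeness is cosine similarity. The sketch below uses tiny four-dimensional vectors that are invented purely for illustration; real embeddings are learned and have hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings, made up for this example.
embeddings = {
    "cat":   [0.80, 0.60, 0.10, 0.00],
    "dog":   [0.75, 0.65, 0.05, 0.10],
    "table": [0.05, 0.10, 0.90, 0.40],
}

sim_cat_dog = cosine_similarity(embeddings["cat"], embeddings["dog"])
sim_cat_table = cosine_similarity(embeddings["cat"], embeddings["table"])

print(f"cat vs dog:   {sim_cat_dog:.3f}")
print(f"cat vs table: {sim_cat_table:.3f}")
```

With these toy values, the cat/dog pair scores much higher than the cat/table pair, which is the pattern the list above describes.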

Why Similar Words Have Similar Vectors

During training, the model adjusts its embeddings so that words occurring in similar contexts end up near each other in vector space. This happens as a side effect of the next-word prediction objective. Over time, interchangeable and related words develop similar embeddings, which helps the model generalize its predictions. The embedding layer thus learns semantic relationships: it groups synonyms together and forms distinct regions for groups of related concepts. This explains why “hello” and “hi” end up with similar vectors, and how the Transformer’s embeddings capture meaning from basic units of language.

For instance:

The cat sat on the ___ and The dog sat on the ___ .

Because cat and dog appear in similar contexts, their embeddings move closer in vector space.

Step 3: Positional Encoding: Teaching the Model Word Order

A key limitation of attention is that it cannot determine the order of tokens on its own, so it requires explicit sequence information. Until we add positional information to the embeddings, the transformer treats the input as an unordered set of words. Positional encoding is how the model receives word order information.

Why Transformers Need Positional Information

Transformers process all tokens simultaneously, unlike RNNs, which process them sequentially. This parallelism makes them fast, but it also means the architecture has no built-in notion of order. If we fed the embeddings in directly, the Transformer would see them as an unordered collection. Without positional encodings, the model would treat “the cat sat” and “sat cat the” identically. The model needs positional information because word order affects meaning.

How Positional Encoding Works

Transformers typically add a positional encoding vector to each token embedding. The original paper used sinusoidal patterns based on the token index. Each position in the sequence has a dedicated vector that is added to the embedding of the token at that position. This establishes order: the token at position 5 always receives that position’s vector, the token at position 6 receives a different one, and so on. The positional vectors are combined with the embedding vectors before they enter the network. The model’s attention layers can then pick up signals such as “this is the third word” or “this is the seventh word”.
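The sinusoidal scheme from the original paper can be sketched in a few lines. This is a minimal pure-Python illustration under the usual formulation (sine on even dimensions, cosine on odd dimensions, base 10000); the helper names and the example embedding are made up, and many current models use other position schemes.

```python
import math

def sinusoidal_position(pos, d_model):
    """Positional vector for one position, following the sinusoidal scheme."""
    vec = []
    for i in range(d_model):
        # Dimensions 2k and 2k+1 share the same frequency.
        angle = pos / (10000 ** ((i - i % 2) / d_model))
        vec.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return vec

def add_positions(token_embeddings):
    """Add each position's vector to the embedding found at that position."""
    d_model = len(token_embeddings[0])
    return [
        [e + p for e, p in zip(emb, sinusoidal_position(pos, d_model))]
        for pos, emb in enumerate(token_embeddings)
    ]

same_word = [0.5, -0.2, 0.1, 0.3]  # hypothetical embedding of one token
encoded = add_positions([same_word, same_word, same_word])

for pos, vec in enumerate(encoded):
    print(pos, [round(v, 3) for v in vec])
```

The same token embedding produces a different input vector at each position, which is what lets the model tell positions apart.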

In short, without positional encoding the network’s input becomes an unordered bag of tokens, because all positional information is lost. Positional encodings restore that order information so the Transformer can distinguish sentences that differ only by word order.

Why Word Order Matters in Language

Word order in natural language determines the meaning of sentences. The two sentences “The dog chased the cat” and “The cat chased the dog” differ only in word order, yet they mean different things. An LLM needs to know word positions in order to capture such distinctions. Positional encoding gives attention the ability to process sequential information, letting the model attend to absolute and relative position as needed.

Part 2: The Core Idea That Made Transformers Powerful

The key innovation behind the transformer is the self-attention mechanism. It lets the tokens of a sentence interact with one another directly.

Self-attention allows every token to look at all other tokens in the sequence at the same time instead of processing text in a linear fashion.

Step 4: Self-Attention: How Tokens Understand Context

Self-attention is the mechanism by which each token in a sequence gathers information from all the other tokens. In a self-attention layer, every token computes attention scores against every token in the sequence.

The Core Intuition of Attention

When you read a sentence, you want to know how the current word relates to all the other words in it. An attention mechanism does something similar: it produces its output as a weighted combination of all token representations. Each token decides which other words it needs in order to understand its own meaning.

For example: The animal didn’t cross the street because it was too tired.

Here, the word “it” most likely refers to “animal”, not “street”. This is where self-attention comes in: it allows the model to learn these contextual relationships.

Query, Key, and Value Explained Intuitively

The self-attention mechanism uses three vectors for each token: a query vector, a key vector, and a value vector. All three are generated from the token’s embedding through learned weight matrices. The query acts as a search for particular information, the key describes what the token offers to other tokens, and the value carries the token’s actual content.

- Query (Q): What the token is looking for in its surrounding context.

- Key (K): What the token offers; it is matched against queries to find tokens that hold relevant information.

- Value (V): The information the token contributes once it is attended to.

How Tokens Decide What to Focus On

Self-attention produces a matrix of attention scores for all possible token pairs. For each token, we compute the dot product of its query with the keys of all tokens in the sequence and then apply softmax to turn the scores into weights. The result is a probability distribution that indicates which tokens in the sequence matter most for that token.

The token then updates its own vector with a weighted sum of the value vectors, dominated by the highest-weighted tokens. A word such as “it” will attend strongly to the noun it refers to. In other words, attention weights are dot products of Q and K that have been normalized with softmax. Each token’s new representation is a blend of other tokens, weighted by their contextual relevance.
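The computation described above can be sketched as a single attention head in pure Python. The scaling by the square root of the key dimension follows the original paper and is not spelled out in the text above; the identity weight matrices and the three toy token vectors are assumptions chosen so the numbers are easy to follow.

```python
import math

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(matrix, vec):
    """Multiply a (rows x cols) matrix by a vector of length cols."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]

def self_attention(X, Wq, Wk, Wv):
    """Return (outputs, weights) for one head over the token vectors X."""
    Q = [matvec(Wq, x) for x in X]
    K = [matvec(Wk, x) for x in X]
    V = [matvec(Wv, x) for x in X]
    scale = math.sqrt(len(K[0]))
    outputs, all_weights = [], []
    for q in Q:
        # Score this token's query against every token's key.
        scores = [sum(a * b for a, b in zip(q, k)) / scale for k in K]
        weights = softmax(scores)
        all_weights.append(weights)
        # New representation: weighted sum of the value vectors.
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))]
        )
    return outputs, all_weights

identity = [[1.0, 0.0], [0.0, 1.0]]  # stand-in for learned weight matrices
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three hypothetical token vectors
outputs, weights = self_attention(X, identity, identity, identity)

for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` is one token’s probability distribution over all tokens in the sequence, and each row of `outputs` is that token’s context-aware vector.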

Why Attention Solved Long-Context Problems

Before Transformers, RNNs and CNNs struggled to handle long-range context effectively. Attention lets every token access every other token regardless of distance. Because self-attention processes the full sequence at once, it can detect connections between words at the beginning and end of a long text. This ability to see the whole context helps attention-based models perform well on tasks that require broad context, such as translation and summarization.

Step 5: Multi-Head Attention: Learning Multiple Relationships

Multi-head attention runs several attention operations in parallel, with each head using its own Q/K/V projections. This lets the model capture several kinds of relationships at the same time.

Why One Attention Mechanism Is Not Enough

With a single attention head, the model must capture all context through one set of attention scores. Language, however, exhibits many kinds of patterns: syntax, semantics, named entities, and coreference. A single head might capture one pattern (say, syntactic alignment) but miss others.

Multi-head attention therefore uses separate “heads” that can specialize in different patterns. Each head learns its own queries, keys, and values, so one head may track word order, another semantic similarity, and a third particular phrases. Together they give the model several views of the same sequence.

How Multiple Attention Heads Work

The multi-head layer projects each token into h sets of Q/K/V vectors, one set per head. Each head computes self-attention independently, yielding h distinct context vectors for every token. These are then combined, typically by concatenation, and passed through a linear projection. The result enriches each token’s representation with several attention channels. In short, multi-head attention uses multiple heads to capture different relationships within the same sequence.

Because each head learns its own subspace, the combined system captures more than any single head could. One head might connect “bank” with “money” while another relates “bank” to a riverbank. The combined output is a richer token representation. Many large models use 16 or more heads per layer.
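Here is a minimal sketch of that flow: per-head projections, independent attention in each head, concatenation, then an output projection. The sizes (`d_model = 4`, two heads), the random weights, and the helper names are all assumptions for illustration; in a real model the weights are learned.

```python
import math
import random

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(matrix, vec):
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]

def attention_head(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention over token vectors X."""
    Q = [matvec(Wq, x) for x in X]
    K = [matvec(Wk, x) for x in X]
    V = [matvec(Wv, x) for x in X]
    scale = math.sqrt(len(K[0]))
    out = []
    for q in Q:
        weights = softmax([sum(a * b for a, b in zip(q, k)) / scale for k in K])
        out.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return out

def multi_head_attention(X, heads, Wo):
    """Run every head, concatenate per-token outputs, apply output projection."""
    per_head = [attention_head(X, Wq, Wk, Wv) for Wq, Wk, Wv in heads]
    outputs = []
    for t in range(len(X)):
        concatenated = []
        for head_out in per_head:
            concatenated.extend(head_out[t])
        outputs.append(matvec(Wo, concatenated))
    return outputs

def random_matrix(rows, cols, rng):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]

rng = random.Random(0)  # fixed seed so the sketch is repeatable
d_model, n_heads = 4, 2
d_head = d_model // n_heads
X = [[0.1, 0.2, 0.3, 0.4], [0.5, 0.1, -0.2, 0.0], [-0.3, 0.8, 0.2, 0.1]]
heads = [
    (random_matrix(d_head, d_model, rng),
     random_matrix(d_head, d_model, rng),
     random_matrix(d_head, d_model, rng))
    for _ in range(n_heads)
]
Wo = random_matrix(d_model, n_heads * d_head, rng)
output = multi_head_attention(X, heads, Wo)

print(len(output), len(output[0]))
```

Each head works in a smaller subspace (`d_head`), and the output projection mixes the heads back into a vector of the original size, so the layer can be stacked.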

Part 3: The Transformer Block (The Engine of LLMs)

Transformer blocks combine attention with simple feed-forward computation, relying on residual connections and layer normalization as stabilizing mechanisms. The full model is built by stacking many such blocks. In this section we analyze one block and then show why LLMs need many layers.

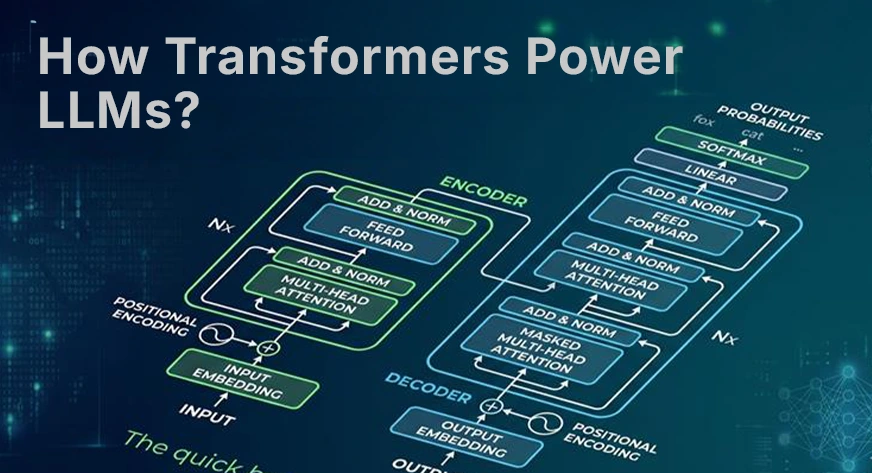

Step 6: The Transformer Decoder Block Architecture

The Transformer decoder block used in GPT-style models contains two sublayers: a masked self-attention layer followed by a position-wise feed-forward neural network. Each sublayer is wrapped in a residual (“skip”) connection and layer normalization. The flowchart shows how the block operates.

Self-Attention Layer

The block’s first main sublayer is masked self-attention. “Masked” means that each token can attend only to itself and earlier tokens, which preserves autoregressive generation. The layer applies multi-head self-attention to every token as described earlier, so each token draws additional context from the tokens before it. The masked variant is used for generation, whereas encoders such as BERT use unmasked self-attention.
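A small sketch of the masking step, assuming we already have a matrix of raw attention scores (the numbers below are made up). Scores for future positions are set to negative infinity before softmax, so their weights come out as exactly zero.

```python
import math

def causal_softmax(scores_row, position):
    """Softmax over one row of scores, hiding every token after `position`."""
    masked = [
        s if j <= position else float("-inf")
        for j, s in enumerate(scores_row)
    ]
    m = max(masked)  # finite, because position `position` itself is visible
    exps = [math.exp(s - m) for s in masked]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical raw attention scores: row i holds token i's scores for all tokens.
scores = [
    [0.2, 1.5, -0.3],
    [0.9, 0.1, 0.4],
    [0.3, 0.8, 1.1],
]
weights = [causal_softmax(row, i) for i, row in enumerate(scores)]

for row in weights:
    print([round(w, 3) for w in row])
```

The first token can only attend to itself, the second to the first two, and so on, which gives the lower-triangular pattern that autoregressive generation relies on.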

Feed-Forward Neural Network (FFN)

After attention, each token vector passes through the same feed-forward network, applied independently at every position. It is a simple two-layer perceptron: a linear layer that expands the dimension, a GELU or ReLU nonlinearity, and another linear layer that reduces the dimension back. This position-wise network lets the model apply a richer transformation to each token. It introduces nonlinearity, which allows the block to compute things beyond the linear mixing done by attention. Because the network operates on each token separately, all tokens can be processed in parallel.
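The expand, activate, project-back pattern can be sketched as follows. The weights are small hand-picked values for illustration (a real model learns them and typically expands to about four times the model dimension), and the GELU uses the common tanh approximation.

```python
import math

def gelu(x):
    """Tanh approximation of the GELU activation."""
    return 0.5 * x * (1.0 + math.tanh(math.sqrt(2.0 / math.pi) * (x + 0.044715 * x ** 3)))

def linear(W, b, x):
    """Compute W @ x + b for a single vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]

def feed_forward(x, W1, b1, W2, b2):
    hidden = [gelu(h) for h in linear(W1, b1, x)]  # expand, then nonlinearity
    return linear(W2, b2, hidden)                  # project back to d_model

# Hypothetical weights: d_model = 2, hidden size = 4.
W1 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 1.0]]
b1 = [0.0, 0.0, 0.0, 0.0]
W2 = [[0.5, 0.0, 0.5, 0.0], [0.0, 0.5, 0.0, 0.5]]
b2 = [0.0, 0.0]

tokens = [[1.0, 2.0], [0.0, -1.0]]
# The same network is applied to every position independently.
outputs = [feed_forward(t, W1, b1, W2, b2) for t in tokens]

for out in outputs:
    print([round(v, 3) for v in out])
```

Because each token is handled on its own, the list comprehension could be replaced by one batched matrix multiplication, which is how it runs on a GPU.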

Residual Connections

Every sublayer is wrapped in a residual connection: we add the sublayer’s input back to its output. The attention sublayer uses the following operation:

x = LayerNorm(x + Attention(x)); similarly for the FFN: x = LayerNorm(x + FFN(x)).

Skip connections let gradients flow easily through the network, which protects against vanishing gradients in deep architectures. They also let the network effectively bypass a sublayer when its contribution to the signal is small. Residuals make it possible to train many layers because they keep optimization stable.

Layer Normalization

Layer normalization is applied after each addition. LayerNorm standardizes each token’s vector to have a mean of 0 and a variance of 1, which keeps activations in a range that is well-behaved for training. Skip connections and normalization together form the Add & Norm block. They help prevent vanishing gradients and stabilize training. Without them, training a deep transformer would be difficult and would likely diverge.
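A minimal sketch of the Add & Norm step in the post-norm form used in this article, LayerNorm(x + Sublayer(x)). It leaves out the learned scale and shift parameters that real implementations include, and `toy_sublayer` is a made-up stand-in for attention or the FFN. Some GPT-style models instead normalize before the sublayer.

```python
import math

def layer_norm(x, eps=1e-5):
    """Standardize one token vector to mean 0 and variance close to 1."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def add_and_norm(x, sublayer):
    """Post-norm residual step: LayerNorm(x + sublayer(x))."""
    out = sublayer(x)
    return layer_norm([a + b for a, b in zip(x, out)])

def toy_sublayer(x):
    # Hypothetical stand-in for attention or the feed-forward network.
    return [0.1 * v for v in x]

x = [1.0, 2.0, 3.0, 4.0]
y = add_and_norm(x, toy_sublayer)

mean_y = sum(y) / len(y)
var_y = sum((v - mean_y) ** 2 for v in y) / len(y)
print([round(v, 3) for v in y])
print(round(mean_y, 6), round(var_y, 6))
```

Whatever scale the sublayer output has, the normalized vector that leaves the block has roughly zero mean and unit variance.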

Step 7: Stacking Transformer Layers

Modern LLMs contain many transformer layers arranged in sequence. Each layer refines the output of the preceding layer. Models typically stack dozens of blocks or more: GPT-2 small used 12 layers, the largest GPT-3 model used 96, and current models may use even more.

Why LLMs Use Dozens or Hundreds of Layers

The reason is simple: more layers give the model more capacity to learn complex features. Each layer transforms the representation, which develops from basic embeddings into high-level concepts. Early layers tend to capture basic grammar and local patterns, while later layers build up complex meaning and knowledge about the world. Depth, along with total parameter count, is one of the main ways model generations differ, although exact figures for models such as GPT-3.5 and GPT-4 have not been published.

How Representations Improve Across Layers

Each layer refines the token representations with additional contextual information. After the first layer, each word vector includes information from the related words it attended to. By the last layer, the vector has become a rich representation that reflects the meaning of the whole sentence. Tokens thus progress from basic word meanings to deep semantic interpretations.

From Words to Deep Semantic Understanding

After passing through all the layers, a token’s vector no longer resembles its original word embedding; it now carries a refined picture of the surrounding context. The word “bank” ends up with an enriched vector that moves toward “finance” when “loan” and “interest” appear earlier, and toward “river” when “water” and “fishing” appear earlier.

The model therefore uses multiple transformer layers to progressively disambiguate word meanings, resolve references, and carry detailed information. Each successive layer deepens the representation, which lets the model produce text that is coherent and context-aware.

Part 4: How LLMs Actually Generate Text

After all this encoding and context-building, how does an LLM produce words? LLMs are autoregressive models: they generate output one token at a time, with each prediction conditioned on the previously generated tokens. Here we explain the final steps: computing probabilities and sampling a token.

Step 8: Autoregressive Text Generation

In autoregressive generation, the model predicts the next token by running repeated forward passes, appending each newly generated token to the input.

Predicting the Next Token

The LLM starts from a prompt, which is a sequence of tokens. The prompt tokens pass through the transformer layers, and the final output contains one vector per position. Generation uses the vector at the last position, i.e. the final token of the prompt. That vector goes into a final linear layer, often called the unembedding layer, which produces a score (logit) for every token in the vocabulary. These raw scores indicate how likely each token is to come next.

The Role of Softmax and Probabilities

The logits are unnormalized scores, one for each possible token. The model applies the softmax function to turn them into a probability distribution: it exponentiates all logits and then normalizes them so they sum to 1.

Softmax gives more probability mass to higher logits and pushes the rest toward zero. The result is a probability for every possible next token. Always choosing the most likely token (greedy decoding) tends to produce repetitive and dull text, so modern models usually generate more varied output by sampling from the distribution with controlled randomness.
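As a small illustration, here is softmax applied to a handful of logits, followed by a greedy pick. The four-word vocabulary and the logit values are invented for the example; a real model produces one logit per entry in a vocabulary of tens of thousands of tokens or more.

```python
import math

def softmax(logits):
    """Exponentiate and normalize so the outputs sum to 1."""
    m = max(logits)  # subtracting the max avoids overflow, result is unchanged
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical vocabulary and logits for the next-token position.
vocab = ["Paris", "London", "banana", "the"]
logits = [5.0, 2.0, -1.0, 0.5]

probs = softmax(logits)
greedy_token = vocab[probs.index(max(probs))]

for token, p in zip(vocab, probs):
    print(f"{token:>7}: {p:.4f}")
print("greedy choice:", greedy_token)
```

Greedy decoding always returns the top entry, which is why sampling methods are used when more varied output is wanted.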

Sampling Methods (Temperature, Top-K, Top-P)

To turn probabilities into a concrete choice, LLMs use sampling methods:

- Temperature (T): We divide all logits by the temperature T before applying the softmax function. When T is below 1, the distribution becomes sharper and more peaked, so the model picks safer and more predictable words. When T is above 1, the distribution becomes flatter, making uncommon words more likely and the output more creative.

- Top-K sampling: We sort all tokens by probability and keep only the K most likely. With K set to 50, the model considers only the 50 most probable tokens, and all other tokens receive zero probability. The probabilities of those K tokens are renormalized before one is sampled.

- Top-P (nucleus) sampling: Instead of a fixed K, we take the smallest set of tokens whose total probability mass reaches a threshold p. If p equals 0.95, we keep the top tokens until their cumulative probability reaches or exceeds 95%. In a high-confidence situation (e.g. “The capital of France is”), only “Paris” (maybe plus one or two others) is considered. In a more open-ended, creative context, many tokens may be included. Top-P adapts to the situation and is widely used (it’s the default in many APIs).

Temperature, top-K, and top-P together control how random or deterministic the output is. Many LLM services let you adjust these settings, and the values you choose determine whether outputs are more precise or more creative.
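The three controls can be combined in one small function. This is a sketch under simple assumptions: the function name `sample_next_token` and its parameters are made up for illustration, the temperature must be greater than zero, and production libraries differ in details such as the order in which the filters are applied.

```python
import math
import random

def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(logits, temperature=1.0, top_k=None, top_p=None, rng=random):
    """Return the index of a sampled token after temperature, top-K, top-P."""
    probs = softmax([z / temperature for z in logits])
    # Token indices sorted from most to least probable.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    if top_k is not None:
        ranked = ranked[:top_k]
    if top_p is not None:
        kept, cumulative = [], 0.0
        for i in ranked:
            kept.append(i)
            cumulative += probs[i]
            if cumulative >= top_p:
                break  # smallest set whose mass reaches the threshold
        ranked = kept
    # random.choices renormalizes the weights of the surviving tokens.
    weights = [probs[i] for i in ranked]
    return rng.choices(ranked, weights=weights, k=1)[0]

logits = [5.0, 2.0, -1.0, 0.5]  # hypothetical logits for four tokens
rng = random.Random(0)          # fixed seed so runs are repeatable

print(sample_next_token(logits, temperature=0.7, top_k=2, rng=rng))
print(sample_next_token(logits, temperature=1.5, top_p=0.95, rng=rng))
```

Setting `top_k=1` reduces this to greedy decoding, while a higher temperature with a large `top_p` lets less likely tokens through.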

Why Transformers Scale So Well

There are two main reasons why transformers scale so well:

- Parallel Processing: Transformers replace sequential recurrence with matrix multiplications and attention, allowing multiple tokens to be processed at once. Unlike RNNs, they handle entire sentences in parallel on GPUs, which makes training much faster and speeds up processing of the prompt at inference time.

- Handling Long Context: Transformers use attention to connect words directly, letting them capture long-range context far better than RNNs or CNNs. They can handle dependencies across thousands of tokens, enabling LLMs to process entire documents or conversations.

Conclusion

Transformers have fundamentally reshaped natural language processing by enabling models to process entire text sequences and capture complex relationships between words. From tokenization and embeddings to positional encoding and attention mechanisms, each component contributes to building a rich understanding of language.

Through transformer blocks, these representations are refined using attention layers, feed-forward networks, residual connections, and normalization. This pipeline allows LLMs to generate coherent text token by token, establishing transformers as the core foundation of modern AI systems such as GPT, Claude, and Gemini.

Frequently Asked Questions

Q1. How do transformers help LLMs understand language?

A. Transformers use self-attention and embeddings to capture context and relationships between words, enabling models to process entire sequences and understand meaning efficiently.

Q2. Why are transformers better than RNNs and LSTMs?

A. Transformers process all tokens in parallel and handle long-range dependencies effectively, making them faster and more scalable than sequential models like RNNs and LSTMs.

Q3. How do LLMs generate text using transformers?

A. LLMs predict the next token using probabilities from softmax and sampling methods, producing text step by step based on learned language patterns.

Hello! I’m Vipin, a passionate data science and machine learning enthusiast with a strong foundation in data analysis, machine learning algorithms, and programming. I have hands-on experience in building models, managing messy data, and solving real-world problems. My goal is to apply data-driven insights to create practical solutions that drive results. I’m eager to contribute my skills in a collaborative environment while continuing to learn and grow in the fields of Data Science, Machine Learning, and NLP.
