No matter my Linux distribution or setup, there comes a point when I start experiencing slowdown or lag in daily tasks. Often, it gets confusing because when I run top, CPU and memory usage look normal.

I recently tried iotop, and in real time, it revealed apps that were reading from or writing to my disk, an aspect I typically overlook when faced with performance issues. iotop is now one of my go-to commands for troubleshooting Linux.

Install iotop in seconds

Simple to set up, but there's one detail most guides miss

Afam Onyimadu / MUO

To install iotop on Debian-based distributions such as Ubuntu or Mint, run:

sudo apt install iotop

On Fedora, run sudo dnf install iotop; on Arch, run sudo pacman -S iotop.
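If you move between distributions, the three install commands above can be folded into a small helper. This is only a sketch: the function names `iotop_install_cmd` and `detect_pkg_manager` are hypothetical, and the helper prints the command rather than running it.

```shell
#!/bin/sh
# Print the iotop install command for a given package manager name.
# The commands are the ones listed above; the function names are made up.
iotop_install_cmd() {
    case "$1" in
        apt)    echo "sudo apt install iotop" ;;
        dnf)    echo "sudo dnf install iotop" ;;
        pacman) echo "sudo pacman -S iotop" ;;
        *)      echo "unsupported package manager: $1" >&2; return 1 ;;
    esac
}

# Print the first supported package manager found on PATH.
detect_pkg_manager() {
    for pm in apt dnf pacman; do
        if command -v "$pm" >/dev/null 2>&1; then
            echo "$pm"
            return 0
        fi
    done
    return 1
}
```

Running `iotop_install_cmd "$(detect_pkg_manager)"` prints the matching command so you can review it before executing it yourself.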

There are two variants of this tool: the regular iotop (Python-based) and iotop-c (written in C). iotop-c is the newer version and becomes the automatic default when you install iotop on some modern Ubuntu and Debian systems. Although it's almost impossible to tell them apart from the interface, iotop-c is smoother in daily use. On distros that offer iotop-c, it's usually the better choice.

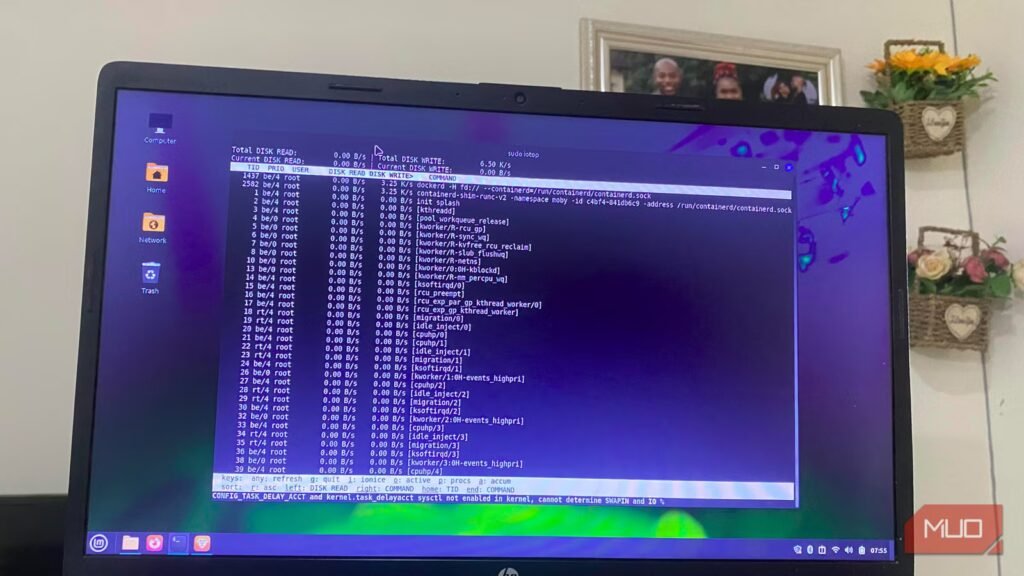

Understand what iotop is really telling you

The few numbers that actually reveal disk pressure

The full iotop interface is overwhelming, but once you recognize that much of it may be irrelevant, it becomes easier to interpret. I typically consult four columns for diagnosis:

- DISK READ: Shows bytes read per second

- DISK WRITE: Shows bytes written per second

- IO%: Percentage of time the process/thread spent doing I/O (waits + swapin)

- COMMAND: Shows the process name

I usually skip the top section because viewing the total disk activity isn't very helpful if you need to track down the cause. I get better insights looking through the list below it, and the most consequential flags are -o and -a. With -o, I get a more streamlined list that includes only processes currently performing I/O. -a switches to accumulated mode, where I see totals of bytes read or written since iotop started.

Most of my diagnoses happen with the -o flag. -a is a last resort if I suspect an intermittent problem, such as occasional stuttering or brief lag while opening files, or when I don't find anything in iotop's live view.
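The two modes can be wrapped in small shell functions, along with a non-interactive capture for intermittent problems. Treat this as a sketch: the function names and the `IOTOP` variable are hypothetical, while -b (batch), -t (timestamps), -d (seconds between samples) and -n (number of samples) are standard iotop options.

```shell
#!/bin/sh
# Command used to launch iotop; override it if your setup differs
# (for example IOTOP="sudo iotop-c"). The variable name is made up.
IOTOP="${IOTOP:-sudo iotop}"

# Live view: only processes currently doing I/O (-o).
io_live() { $IOTOP -o "$@"; }

# Accumulated view: totals since iotop started (-a), combined with -o
# so processes that did no I/O stay hidden.
io_accumulated() { $IOTOP -o -a "$@"; }

# Non-interactive capture for intermittent problems.
# $1 = seconds between samples (default 2), $2 = number of samples (default 30).
io_capture() { $IOTOP -o -a -b -t -d "${1:-2}" -n "${2:-30}"; }
```

For example, `io_capture 2 60 > io.log` would record roughly two minutes of accumulated totals that you can read after the stutter has passed.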

How I use iotop to catch the exact process

A real workflow that turns disk activity into a clear answer

I realized I needed iotop when my system became slow and the regular system monitors I use couldn't catch the culprit. After installing it, I ran the command:

sudo iotop -o -d 2

Within a few seconds, one process settled at the top. I suggest slowing the refresh rate so that it's easier to catch patterns (that is what -d 2 in the command does: it refreshes every two seconds). Watching this process, I noticed continuous disk writes that persisted across refresh cycles. It was the duration that stood out, because quick spikes are normal.

My browser had a process constantly writing megabytes to my disk, and with this observation, I could rule out other possible culprits. On other occasions, I've been able to catch different processes causing long spikes:

- A backup job (rsync) writing steadily

- A package manager pulling updates

- A logging service writing more than expected

The rule of thumb is to investigate when a process stays at the top, generating I/O for several refresh cycles. iotop removes the guesswork because it shows you both the activity and an exact process name.
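Once iotop has given you a suspect PID, you can double-check that its writes are sustained without iotop itself. On Linux, /proc/<PID>/io exposes cumulative per-process counters, and its write_bytes field counts bytes the process caused to be sent to the storage layer. The sketch below samples that counter over several cycles; the function names are hypothetical, the interval must be a whole number of seconds, and reading another user's process typically requires root.

```shell
#!/bin/sh
# Print the cumulative write_bytes value from a /proc/<PID>/io-style file.
write_bytes_of() {
    awk '$1 == "write_bytes:" {print $2}' "$1"
}

# Average KiB/s between two cumulative byte counts taken $3 seconds apart.
kib_per_sec() {
    echo $(( ($2 - $1) / 1024 / $3 ))
}

# Sample a PID for several cycles. Non-zero rates on every line suggest
# sustained writing rather than a quick spike.
# $1 = PID, $2 = interval in seconds (default 2), $3 = cycles (default 5).
watch_writes() {
    pid=$1
    interval=${2:-2}
    cycles=${3:-5}
    prev=$(write_bytes_of "/proc/$pid/io") || return 1
    i=0
    while [ "$i" -lt "$cycles" ]; do
        sleep "$interval"
        cur=$(write_bytes_of "/proc/$pid/io") || return 1
        echo "$(kib_per_sec "$prev" "$cur" "$interval") KiB/s"
        prev=$cur
        i=$((i + 1))
    done
}
```

Calling `watch_writes 1234 2 5` (with 1234 standing in for the PID iotop showed) prints five rates, one per two-second cycle.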

What to do once you find the culprit

Turn visibility into action without breaking anything

I don't immediately kill a process that's writing large amounts to disk. In fact, the exact step I take depends on what the process is. Adjusting an application's settings or closing it entirely is reasonable when the culprit is a user application. You can also check what's triggering the activity. In the case of a browser, an extension or aggressive caching may be causing the spikes.

However, when it's a process I'm not familiar with, I don't kill it straightaway. I prefer to do a Google search to learn more about it and decide if it's safe to kill.

Also, not every process you see at the top is a sign of abnormal behavior. Kernel threads like kworker and jbd2 can be normal: jbd2 is tied to ext4 journaling and may be high during heavy writes, while kworker is a general kernel thread that can appear for many reasons. To inspect a process, run lsof -p followed by its process ID to see the files it accesses. Combining this information with iotop helps me identify the triggers.
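The output of lsof -p can be long, so it helps to narrow it to regular files the process has open for writing. The filter below is a sketch that assumes lsof's default nine-column layout (COMMAND, PID, USER, FD, TYPE, DEVICE, SIZE/OFF, NODE, NAME); the function name `writable_files` is hypothetical.

```shell
#!/bin/sh
# Read `lsof` output on stdin and print the names of regular files (TYPE REG)
# whose file descriptor is open for writing (FD mode w) or read/write (u).
# Assumes the default nine-column layout, with NAME starting at field 9.
writable_files() {
    awk '$5 == "REG" && $4 ~ /^[0-9]+[wu]/ {
        for (i = 9; i <= NF; i++)
            printf "%s%s", $i, (i < NF ? " " : "\n")
    }'
}
```

With a PID taken from iotop, `sudo lsof -p 1234 | writable_files` lists the candidate files behind the writes (1234 is a placeholder).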

Tools that complement iotop

I don't use iotop in isolation. It shows me the process causing heavy disk I/O. However, for context, I combine it with a few other tools:

| Tool | What it shows | When to use it |
| --- | --- | --- |
| iostat | Disk/device stats | Check overall disk load |
| lsof | Open files per process | See what's being accessed |
| htop | CPU usage + iowait | Get system-wide context |
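Not every system ships all of these tools, so a quick check saves a failed command in the middle of troubleshooting. A minimal sketch, with a hypothetical function name:

```shell
#!/bin/sh
# Print each named command that is not found on PATH, one per line.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || echo "$tool"
    done
}
```

Running `missing_tools iotop iostat lsof htop` prints nothing when everything is installed. On many distributions, iostat comes from the sysstat package, and `iostat -x 2` then shows extended per-device statistics every two seconds.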

By using iotop with these tools, I can trace the exact files involved in high disk reads and writes. For most issues, troubleshooting never has to go any deeper. iotop is now one of the most ridiculously useful commands I run in my terminal.