Understanding audio has always been the multimodal frontier that lags behind vision. While image-language models have rapidly scaled toward real-world deployment, building open models that robustly reason over speech, environmental sounds, and music, especially at length, has remained quite hard. Researchers at NVIDIA and the University of Maryland are now taking a direct swing at that gap.

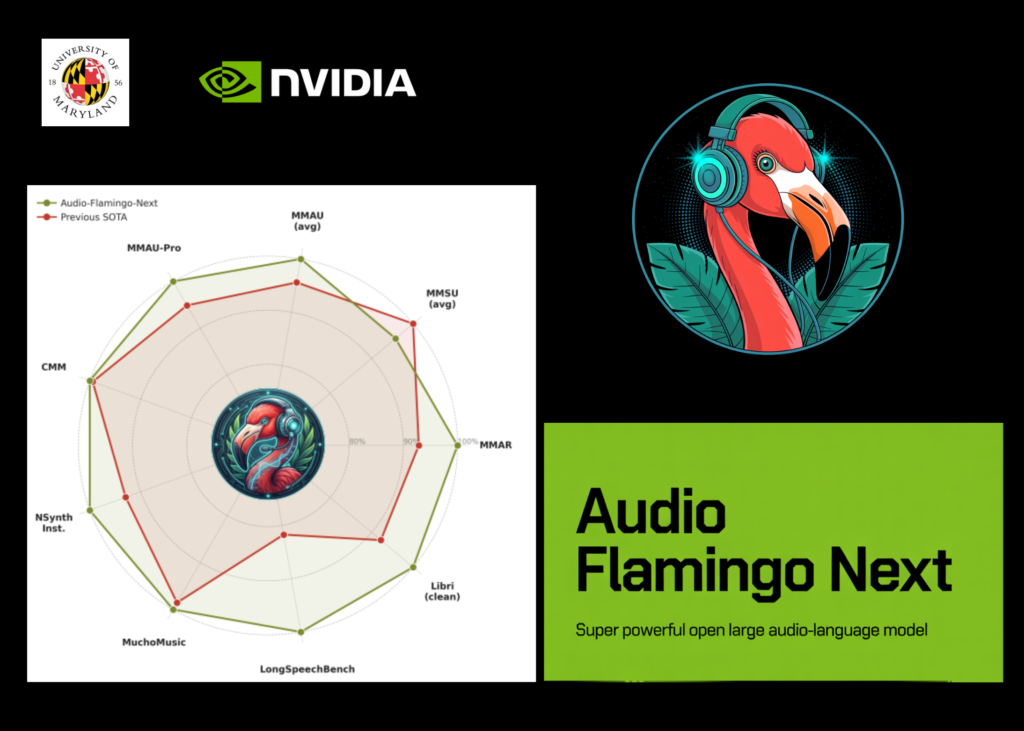

The research team has released Audio Flamingo Next (AF-Next), the most capable model in the Audio Flamingo series and a fully open Large Audio-Language Model (LALM) trained on internet-scale audio data.

AF-Next comes in three specialized variants for different use cases: AF-Next-Instruct for general question answering, AF-Next-Think for advanced multi-step reasoning, and AF-Next-Captioner for detailed audio captioning.

What is a Large Audio-Language Model (LALM)?

A Large Audio-Language Model (LALM) pairs an audio encoder with a decoder-only language model to enable question answering, captioning, transcription, and reasoning directly over audio inputs. Think of it as the audio equivalent of a vision-language model like LLaVA or GPT-4V, but designed to handle speech, environmental sounds, and music simultaneously within a single unified model.

https://arxiv.org/pdf/2604.10905

The Architecture: Four Components Working in a Pipeline

AF-Next is built around four main components. First is the AF-Whisper audio encoder, a custom Whisper-based encoder further pre-trained on a larger and more diverse corpus, including multilingual speech and multi-talker ASR data. Given an audio input, the model resamples it to 16 kHz mono and converts the waveform into a 128-channel log mel-spectrogram using a 25 ms window and 10 ms hop size. The spectrogram is processed in non-overlapping 30-second chunks through AF-Whisper, which outputs features at 50 Hz, after which a stride-2 pooling layer is applied, halving the rate to 25 Hz (one audio token every 40 ms). The hidden dimension is 1280.
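The front-end numbers above can be checked with a little arithmetic. The sketch below is only an illustration derived from the figures in the text, not code from the release; the function name `frontend_shapes` and the assumption that a final partial chunk is padded to a full 30 seconds are ours.

```python
import math

SAMPLE_RATE_HZ = 16_000   # audio is resampled to 16 kHz mono
WINDOW_MS = 25            # mel-spectrogram window length
HOP_MS = 10               # mel-spectrogram hop size
CHUNK_SECONDS = 30        # non-overlapping chunk length
ENCODER_RATE_HZ = 50      # AF-Whisper output feature rate
POOL_STRIDE = 2           # stride-2 pooling after the encoder


def frontend_shapes(duration_s: float) -> dict:
    """Return frame and token counts for a clip of the given duration.

    Assumption (not stated in the article): a final partial chunk is
    padded to a full 30 seconds, so chunk count is rounded up.
    """
    num_chunks = math.ceil(duration_s / CHUNK_SECONDS)
    tokens_per_chunk = CHUNK_SECONDS * ENCODER_RATE_HZ // POOL_STRIDE
    return {
        "window_samples": SAMPLE_RATE_HZ * WINDOW_MS // 1000,
        "hop_samples": SAMPLE_RATE_HZ * HOP_MS // 1000,
        "mel_frames_per_chunk": CHUNK_SECONDS * 1000 // HOP_MS,
        "tokens_per_chunk": tokens_per_chunk,
        "token_stride_ms": 1000 * POOL_STRIDE // ENCODER_RATE_HZ,
        "num_chunks": num_chunks,
        "audio_tokens": num_chunks * tokens_per_chunk,
    }


if __name__ == "__main__":
    # A 30-minute recording, the longest input length mentioned in the article.
    for key, value in frontend_shapes(30 * 60).items():
        print(f"{key}: {value}")
```

Under these assumptions a 30-minute recording becomes 60 chunks of 750 tokens each, so the audio alone occupies tens of thousands of positions in the language model's context.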

Second is the audio adaptor, a 2-layer MLP that maps AF-Whisper's audio representations into the language model's embedding space. Third is the LLM backbone: Qwen-2.5-7B, a decoder-only causal model with 7B parameters, 36 transformer layers, and 16 attention heads, with context length extended from 32k to 128k tokens through additional long-context training.

A subtle but important architectural detail is Rotary Time Embeddings (RoTE). Standard positional encodings in transformers index a token by its discrete sequence position i. RoTE replaces this: instead of the standard RoPE rotation angle θ ← −i · 2π, RoTE uses θ ← −τ_i · 2π, where τ_i is each token's absolute timestamp. For audio tokens produced at a fixed 40 ms stride, discrete time positions are interpolated before being fed into the RoTE module. This yields positional representations grounded in actual time rather than sequence order, a core design choice enabling the model's temporal reasoning, particularly for long audio. Finally, the fourth component, a streaming TTS module, enables voice-to-voice interaction.
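To make the RoTE idea concrete, here is a minimal sketch that rotates a single 2-D feature pair by an angle derived from a token's timestamp rather than its index. It is a toy illustration, not the model's implementation: the helper names are ours, and the `freq` scaling factor is a hypothetical stand-in for the per-pair frequencies that rotary embeddings normally use.

```python
import math

TOKEN_STRIDE_S = 0.040  # from the text: one audio token every 40 ms


def token_timestamps(num_tokens, start_s=0.0, stride_s=TOKEN_STRIDE_S):
    """Absolute timestamp (in seconds) of each audio token."""
    return [start_s + i * stride_s for i in range(num_tokens)]


def rote_angle(timestamp_s, freq=1.0):
    """theta = -tau * 2*pi, scaled by a hypothetical per-pair frequency."""
    return -timestamp_s * 2.0 * math.pi * freq


def rotate_pair(x, y, theta):
    """Rotate the point (x, y) by theta, as rotary embeddings do per pair."""
    c, s = math.cos(theta), math.sin(theta)
    return (x * c - y * s, x * s + y * c)


def attention_score(q, k, tau_q, tau_k, freq=1.0):
    """Dot product of a query and key after time-based rotation."""
    qx, qy = rotate_pair(q[0], q[1], rote_angle(tau_q, freq))
    kx, ky = rotate_pair(k[0], k[1], rote_angle(tau_k, freq))
    return qx * kx + qy * ky


if __name__ == "__main__":
    q, k = (1.0, 0.5), (0.3, -0.8)
    # Two query/key pairs that are both 200 ms apart, one near the start of
    # the audio and one a minute in: the scores should agree.
    early = attention_score(q, k, 1.00, 1.20, freq=0.3)
    late = attention_score(q, k, 61.00, 61.20, freq=0.3)
    print(early, late)
```

The point of the example is that the score between two tokens depends only on how far apart they are in time, which is the property that lets positions be expressed in seconds instead of sequence steps.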

Temporal Audio Chain-of-Thought: The Key Reasoning Recipe

Chain-of-Thought (CoT) prompting has improved reasoning across text and vision models, but prior audio CoT work showed only small gains because training datasets were limited to short clips with simple questions. AF-Next addresses this with Temporal Audio Chain-of-Thought, where the model explicitly anchors each intermediate reasoning step to a timestamp in the audio before producing an answer, encouraging faithful evidence aggregation and reducing hallucination over long recordings.

To train this capability, the research team created AF-Think-Time, a dataset of question, answer, and thinking-chain triplets curated from challenging audio sources including trailers, movie recaps, mystery stories, and long-form multi-party conversations. AF-Think-Time consists of roughly 43K training samples, with an average of 446.3 words per thinking chain.

Training at Scale: 1 Million Hours, Four Stages

The final training dataset comprises roughly 108 million samples and roughly 1 million hours of audio, drawn from both existing publicly released datasets and raw audio collected from the open internet and subsequently labeled synthetically. New data categories include over 200K long videos spanning 5 to 30 minutes for long-form captioning and QA; multi-talker speech understanding data covering speaker identification, interruption identification, and target-speaker ASR; roughly 1 million samples for multi-audio reasoning across multiple simultaneous audio inputs; and roughly 386K safety and instruction-following samples.

Training follows a four-stage curriculum, each stage with distinct data mixtures and context lengths. Pre-training has two sub-stages: Stage 1 trains only the audio adaptor while keeping both AF-Whisper and the LLM frozen (max audio 30 seconds, 8K token context); Stage 2 additionally fine-tunes the audio encoder while still keeping the LLM frozen (max audio 1 minute, 8K token context). Mid-training also has two sub-stages: Stage 1 performs full fine-tuning of the entire model, adding AudioSkills-XL and newly curated data (max audio 10 minutes, 24K token context); Stage 2 introduces long-audio captioning and QA, down-sampling the Stage 1 mixture to half its original blend weights while expanding context to 128K tokens and audio to 30 minutes. The model resulting from mid-training is released as AF-Next-Captioner. Post-training applies GRPO-based reinforcement learning focusing on multi-turn chat, safety, instruction following, and selected skill-specific datasets, producing AF-Next-Instruct. Finally, CoT-training starts from AF-Next-Instruct, applies SFT on AF-Think-Time, then GRPO using the post-training data mixture, producing AF-Next-Think.
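The pre-training and mid-training schedule can be written down as a small table and sanity-checked against the 25 audio tokens per second implied by the encoder description (50 Hz features followed by stride-2 pooling). This is a sketch built only from the figures above; the `Stage` class, the reading of "8K" as 8 × 1024, and the assumption that mid-training Stage 2 keeps all components trainable are ours. Post-training and CoT-training are omitted because the article gives no audio or context limits for them.

```python
from dataclasses import dataclass

AUDIO_TOKENS_PER_SECOND = 25  # 50 Hz encoder output after stride-2 pooling


@dataclass(frozen=True)
class Stage:
    name: str
    trainable: tuple       # components that receive gradient updates
    max_audio_s: int       # maximum audio length in seconds
    context_tokens: int    # "8K" is read as 8 * 1024 (an assumption)


CURRICULUM = [
    Stage("pre-training 1", ("adaptor",), 30, 8 * 1024),
    Stage("pre-training 2", ("adaptor", "encoder"), 60, 8 * 1024),
    Stage("mid-training 1", ("adaptor", "encoder", "llm"), 600, 24 * 1024),
    # Assumption: Stage 2 of mid-training continues full fine-tuning.
    Stage("mid-training 2", ("adaptor", "encoder", "llm"), 1800, 128 * 1024),
]


def audio_token_budget(stage: Stage) -> tuple:
    """Return (audio tokens at max length, tokens left for text)."""
    audio_tokens = stage.max_audio_s * AUDIO_TOKENS_PER_SECOND
    return audio_tokens, stage.context_tokens - audio_tokens


if __name__ == "__main__":
    for stage in CURRICULUM:
        audio, remaining = audio_token_budget(stage)
        print(f"{stage.name}: {audio} audio tokens, {remaining} left for text")
```

Under these assumptions every stage leaves room for text, which suggests the context lengths were chosen to track the maximum audio duration at each step.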

One notable contribution from the research team is hybrid sequence parallelism, which makes 128K-context training feasible on long audio. Without it, audio token expansion blows past standard context windows and the quadratic memory cost of self-attention becomes prohibitive. The solution combines Ulysses attention, which uses all-to-all collectives to distribute sequence and head dimensions within nodes where high-bandwidth interconnects are available, with Ring attention, which circulates key-value blocks across nodes via point-to-point transfers. Ulysses handles intra-node communication efficiently; Ring scales across nodes.
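The reason key-value blocks can be circulated one at a time is that softmax attention can be accumulated incrementally. The sketch below simulates that accumulation in a single process for one query vector, with no real communication, no causal mask, and no Ulysses-style head sharding; it is a toy illustration of the blockwise arithmetic rather than the team's training code, and all function names are ours.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def full_attention(q, keys, values):
    """Reference: softmax over all scores at once (every key in memory)."""
    scores = [dot(q, k) for k in keys]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) / total
            for d in range(dim)]


def blockwise_attention(q, kv_blocks):
    """Same result, visiting one key/value block at a time.

    Each block stands in for the shard that would arrive from a
    neighbouring device at one step of the ring.
    """
    dim = len(kv_blocks[0][1][0])
    running_max = float("-inf")
    denom = 0.0
    acc = [0.0] * dim
    for keys, values in kv_blocks:
        scores = [dot(q, k) for k in keys]
        new_max = max(running_max, max(scores))
        # Rescale earlier partial sums to the new maximum (0.0 on first block).
        rescale = math.exp(running_max - new_max)
        denom *= rescale
        acc = [a * rescale for a in acc]
        for s, v in zip(scores, values):
            w = math.exp(s - new_max)
            denom += w
            for d in range(dim):
                acc[d] += w * v[d]
        running_max = new_max
    return [a / denom for a in acc]


if __name__ == "__main__":
    q = [0.5, -1.0]
    keys = [[1.0, 0.0], [0.0, 1.0], [2.0, -1.0], [-1.0, 0.5]]
    values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]]
    blocks = [(keys[:2], values[:2]), (keys[2:], values[2:])]
    print(full_attention(q, keys, values))
    print(blockwise_attention(q, blocks))
```

Because each device only ever holds its own queries plus one visiting key-value block, the full score matrix over the whole sequence never has to be held on a single device.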

Benchmark Results: Strong Across the Board

On MMAU-v05.15.25, the most widely used audio reasoning benchmark, AF-Next-Instruct achieves an average accuracy of 74.20 vs. Audio Flamingo 3's 72.42, with AF-Next-Think reaching 75.01 and AF-Next-Captioner pushing to 75.76. Gains appear across all three subcategories: sound (79.87), music (75.3), and speech (72.13). On the harder MMAU-Pro benchmark, AF-Next-Think (58.7) surpasses the closed-source Gemini 2.5 Pro (57.4).

Music understanding sees particularly strong gains. On Medley-Solos-DB instrument recognition, AF-Next reaches 92.13 vs. Audio Flamingo 2's 85.80. On SongCaps music captioning, GPT5 coverage and correctness scores jump from 6.7 and 6.2 (AF3) to 8.8 and 8.9 respectively.

Long-audio understanding is where AF-Next most clearly separates itself. On LongAudioBench, AF-Next-Instruct achieves 73.9, outperforming both Audio Flamingo 3 (68.6) and the closed-source Gemini 2.5 Pro (60.4). On the speech-inclusive variant (+Speech), AF-Next reaches 81.2 vs. Gemini 2.5 Pro's 66.2.

On ASR, AF-Next-Instruct sets new lows among LALMs with a Word Error Rate of 1.54 on LibriSpeech test-clean and 2.76 on test-other. On VoiceBench, AF-Next-Instruct achieves the highest scores on AlpacaEval (4.43), CommonEval (3.96), and OpenBookQA (80.9), surpassing Audio Flamingo 3 by over 14 points on OpenBookQA. On CoVoST2 speech translation, AF-Next shows a particularly notable 12-point improvement over Phi-4-mm on Arabic EN→X translation (21.9 vs. 9.9).
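For readers less familiar with the ASR metric, Word Error Rate is the word-level edit distance between a reference transcript and the model's output, divided by the number of reference words. The sketch below is a generic textbook implementation, not the evaluation script behind the reported numbers; real evaluations typically also normalize casing and punctuation, which is skipped here. The LibriSpeech figures quoted above are presumably percentages, so 1.54 would correspond to 0.0154 on this function's scale.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so
    # far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    # One substitution and one deletion against a four-word reference.
    print(word_error_rate("a b c d", "a x c"))
```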

Key Takeaways

Here are the key takeaways:

- A Fully Open Audio-Language Model at Internet Scale: AF-Next is considered the first LALM to scale audio understanding to internet-scale data, with roughly 108 million samples and 1 million hours of audio.

- Temporal Audio Chain-of-Thought Tackles Long-Audio Reasoning: Unlike prior CoT approaches, AF-Next explicitly anchors each intermediate reasoning step to a timestamp in the audio before producing an answer. This makes the model significantly more faithful and interpretable on long recordings of up to 30 minutes, a problem prior models largely sidestepped.

- Three Specialized Variants for Different Use Cases: The release includes AF-Next-Instruct for general question answering, AF-Next-Think for advanced multi-step reasoning, and AF-Next-Captioner for detailed audio captioning, allowing practitioners to select the right model for their task rather than using a one-size-fits-all checkpoint.

- Beats Closed Models on Long Audio Despite Being Smaller: On LongAudioBench, AF-Next-Instruct scores 73.9, outperforming the closed-source Gemini 2.5 Pro (60.4) and Audio Flamingo 3 (68.6). On the harder speech-inclusive variant, the gap widens further, with AF-Next reaching 81.2 vs. Gemini 2.5 Pro's 66.2.
