I never thought it would be so difficult to run a local LLM on Windows. Even when it seemed fine, I later realized that the instance was running only on my CPU. Configuring drivers, setting environment variables, and eventually setting up WSL2 after pulling an Ollama model felt like a separate project. And after that, I found the stack very hard to maintain.

I was pleasantly surprised when I tried it on Linux. The process was more direct and easier, and I no longer expected things to break. It felt similar to when I turned my Linux terminal into a local AI assistant.

The setup that should have taken ten minutes

Why Ollama on Windows keeps pulling you back in

The setup process for Ollama on Windows may seem simple, but in reality it goes far beyond downloading and running the installer. On the first run, you may be convinced that everything is perfect because you get text and responses. However, checking with the command ollama ps reveals an idle GPU. The model is running on the CPU, and a mere 3 to 5 tokens per second is considerably slower than it should be.
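To put a number on that slowdown, you can compute tokens per second from the timing fields Ollama returns with a generation. This is a minimal sketch, assuming the response carries `eval_count` (tokens generated) and `eval_duration` (nanoseconds), as the Ollama REST API documents for its generate endpoint. The helper name and the sample values are made up for illustration.

```python
def tokens_per_second(response: dict) -> float:
    """Return generation speed from an Ollama-style response dict.

    Assumes `eval_count` is the number of generated tokens and
    `eval_duration` is the generation time in nanoseconds.
    """
    eval_count = response["eval_count"]
    eval_duration_ns = response["eval_duration"]
    if eval_duration_ns <= 0:
        raise ValueError("eval_duration must be positive")
    return eval_count / (eval_duration_ns / 1e9)


# Hypothetical numbers: 120 tokens generated in 30 seconds.
sample = {"eval_count": 120, "eval_duration": 30_000_000_000}
print(f"{tokens_per_second(sample):.1f} tokens/s")  # prints "4.0 tokens/s"
```

A result in the low single digits on a machine with a capable GPU is a hint that the model is not actually running on it.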

Ollama's silent fallback to the CPU is common and hard to spot because it emits no error. This behavior is possible on other OSes if the available VRAM is too small for the model. On Windows, however, several other factors, such as driver/installer race conditions, environment variable propagation, and competing resource usage, can trigger it.
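Because the fallback produces no error, the only reliable signal is the PROCESSOR column that ollama ps prints. The sketch below parses that output and flags models running purely on the CPU. It assumes the column layout shown in the sample (NAME, ID, SIZE, PROCESSOR, UNTIL) and values such as "100% GPU", "100% CPU", or a split like "48%/52% CPU/GPU"; the layout may differ between Ollama versions, and the model ID in the sample is invented.

```python
import re

# Matches values such as "100% GPU", "100% CPU" or "48%/52% CPU/GPU".
PROCESSOR_RE = re.compile(r"\d+%(?:/\d+%)?\s+(?:CPU/GPU|CPU|GPU)")


def processor_by_model(ps_output: str) -> dict[str, str]:
    """Map each loaded model name to its PROCESSOR value."""
    result = {}
    for line in ps_output.strip().splitlines()[1:]:  # skip the header row
        match = PROCESSOR_RE.search(line)
        if match:
            result[line.split()[0]] = match.group(0)
    return result


def runs_on_cpu_only(processor: str) -> bool:
    """True when the value names the CPU alone, e.g. '100% CPU'."""
    return processor.split()[-1] == "CPU"


# Sample text in the assumed layout; the ID is a placeholder.
SAMPLE = """\
NAME         ID              SIZE      PROCESSOR    UNTIL
llama3:8b    0123456789ab    6.7 GB    100% CPU     4 minutes from now
"""

for model, processor in processor_by_model(SAMPLE).items():
    if runs_on_cpu_only(processor):
        print(f"{model} has fallen back to the CPU ({processor})")
```

To check a live system, you could feed the function the output of `subprocess.run(["ollama", "ps"], capture_output=True, text=True).stdout` instead of the sample string.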

Even when nothing seems out of place, it can still fail; in those cases, you may turn to WSL2. I tried Open WebUI via Docker, which makes Ollama feel like a local ChatGPT even though it is a browser-based service. However, Docker runs most reliably on Windows from WSL2. That is a Linux environment running inside Windows, and you have to configure it carefully to avoid problems.

Of course, you'll eventually get it done right. The small problem, however, is that this process never feels like it's really finished. It's a cycle of fixing one thing, then another, then yet another. You can never predict how long it will take to get a working setup; it might be 5 minutes or 5 hours.

What the Linux install actually looked like

One command, GPU active, and nothing left to configure

Installing a local LLM on Linux, by comparison, was uneventful. I went from one install command to model download to GPU detected, and that was it. After I generated my first response, I hung around just in case there was something to fix; there wasn't.

This isn't a question of which OS is better. It's simply that the install script for Ollama was first written for Linux and worked almost flawlessly, from installing the binaries and registering Ollama as a background service that starts with the system, to automatically detecting the GPU hardware. None of this requires a bridging layer or environment translation. As long as all drivers are in place before the install, the whole process takes care of itself.

If GPU detection fails because you ran the install before the drivers were confirmed, simply reinstall Ollama to fix it.
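After an install or reinstall, a quick way to confirm that the background service is answering is to ask it for its version over HTTP. This is a rough sketch, assuming Ollama is listening on its default address, http://localhost:11434, and exposes the `/api/version` endpoint; the function name is my own, and it only tells you the service is up, not whether the GPU is in use.

```python
import http.client
import json
import urllib.request


def ollama_version(base_url: str = "http://localhost:11434",
                   timeout: float = 2.0):
    """Return the server's version string, or None if nothing answers.

    Assumes the default Ollama address and its /api/version endpoint.
    """
    try:
        with urllib.request.urlopen(f"{base_url}/api/version",
                                    timeout=timeout) as resp:
            return json.load(resp).get("version")
    except (OSError, ValueError, http.client.HTTPException):
        # Connection refused, timeout, or a non-JSON reply.
        return None


version = ollama_version()
if version is None:
    print("Ollama is not answering on the default port")
else:
    print(f"Ollama {version} is running")
```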

From my experience, here is how the process compares on both OSes:

| Step | Windows | Linux |
| --- | --- | --- |
| Install Ollama | .exe installer (GPU use requires separate verification) | One command (GPU detected automatically) |
| GPU actually used on first run | Often falls back to CPU silently | Used immediately if drivers are in place |
| Open WebUI setup | Needs WSL2 installed and Docker configured inside it | Single Docker command |
| AMD GPU acceleration | Experimental (ROCm v6.1+, frequent CPU fallback reported) | Full support via ROCm v7 |
| Ollama runs at startup | Requires manual configuration | Automatic via systemd |

Windows is supported, but Linux is where they were written

If you stay in the ecosystem long enough, you discover that Ollama, Open WebUI, and Docker weren't built for Windows, nor was the Python integration built around them. Windows support is layered on top of them, and it makes a significant difference.

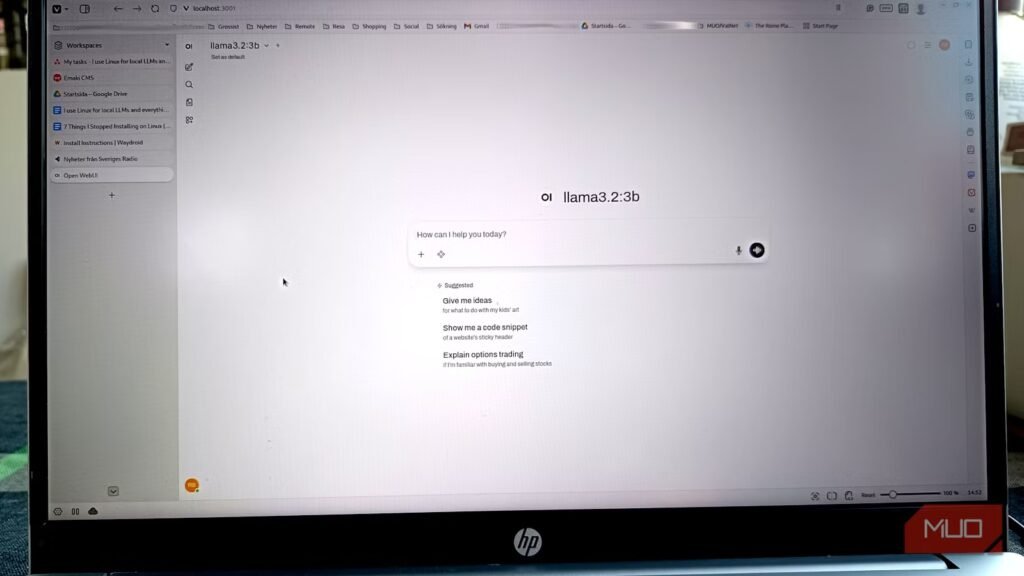

The best example is Open WebUI, which is a single Docker command on Linux. This command immediately starts the container, which connects to the local instance of Ollama once you open a browser. On Windows, you are configuring all these components yourself. It works, but more often than not it doesn't on your first attempt, which sometimes leads to a long debugging process.
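For reference, that single command looks roughly like the argument list below. This is a sketch based on the command published in Open WebUI's README as I recall it; the image tag, volume name, and port mapping are assumptions you should check against the current documentation before running anything. The helper only builds and prints the command.

```python
import shlex


def open_webui_command(host_port: int = 3000) -> list[str]:
    """Build the docker argv for a local Open WebUI container.

    Flags mirror the README command as recalled; treat them as
    assumptions and verify against the current Open WebUI docs.
    """
    return [
        "docker", "run", "-d",
        "-p", f"{host_port}:8080",                       # browser port -> container port
        "--add-host=host.docker.internal:host-gateway",  # lets the container reach Ollama on the host
        "-v", "open-webui:/app/backend/data",            # keep chats and settings across restarts
        "--name", "open-webui",
        "--restart", "always",
        "ghcr.io/open-webui/open-webui:main",
    ]


print(shlex.join(open_webui_command()))
```

Passing the list to `subprocess.run(..., check=True)` would start the container, after which the interface would be served on the chosen host port.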

These problems are even more complicated for AMD GPU users. There's full Linux support for ROCm version 7, AMD's compute platform for GPU inference. On Windows, official support stalls at version 6.1+ and remains experimental. As a result, many Windows users of AMD GPUs still run Ollama on the CPU, regardless of their hardware capabilities. The underlying GPU stack just isn't available.

Consider using LM Studio as a separate tool from Ollama. It's GUI-first, runs cleanly on Windows and Linux, and lets you run models without a terminal. However, it's only a good fit if you want to chat with a local LLM without doing much more.

The time you save is not upfront

While I found the initial install very smooth, I came to appreciate how local LLMs work on Linux more with each subsequent use of my setup. The reason is that it's not a one-time install that you keep using forever. You keep trying newer models as they become available. You're constantly trying smaller, quantized versions when a model turns out to be too slow.

These all require system-level changes. The initial problems on Windows can recur as you make changes, and will only make your experience with local LLMs very unpleasant.

If you are looking to try Linux just for the sake of a local LLM, I would advise you to use Linux Mint. It's probably the best distro for anyone coming from the Windows ecosystem.

Linux Mint is a popular, free, and open-source operating system for desktops and laptops. It is user-friendly, stable, and functional out of the box. Its minimum specs are a 64-bit single-core CPU and 1.5 GB of RAM.