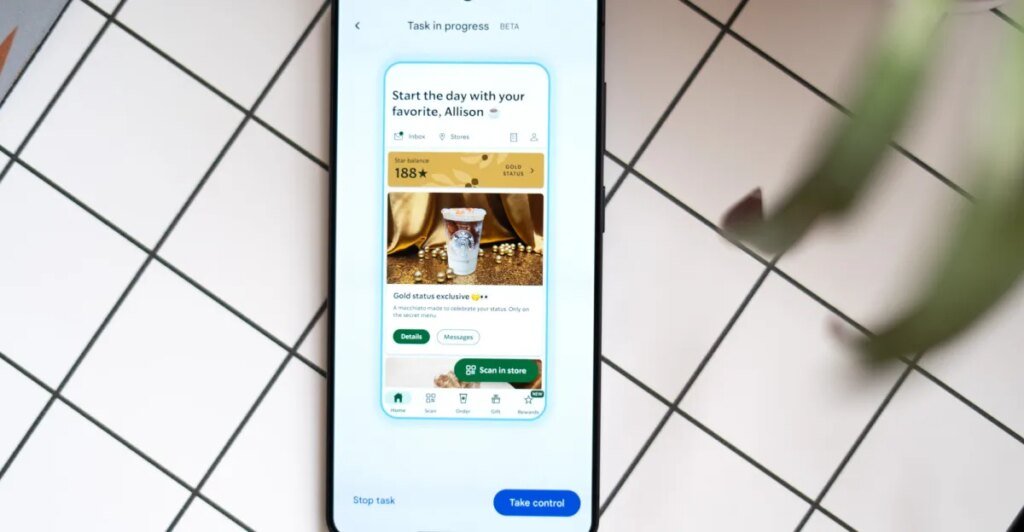

I’ve been testing out Gemini’s new task automation on the Pixel 10 Pro and the Galaxy S26 Ultra. For the first time, it lets Gemini take the wheel and use apps for you. It’s limited to a small subset of apps right now (a handful of food delivery and rideshare services), and it’s still in beta. It’s slow, it’s clunky at times, and it doesn’t solve any major problem you had using your phone. But it’s impressive as hell, and I don’t think it’s hyperbole to say this is a glimpse of the future. We’re still a long way off, but this is the first time I’ve seen a true AI assistant actually working on a phone, not in a keynote presentation or a carefully controlled demo inside a convention hall.

First off: Gemini is much slower than you, or me, or almost anyone at using a phone. If you need to order an Uber right this second, you’re still the best person for the job. Before you write it off, though, remember that task automation is designed to run in the background while you do other things on your phone. Even better, it keeps working while you’re not looking at your phone, so you can do things like check that your passport is in your bag for the tenth time.

But if you’re curious, like I am, you can watch the whole thing happen. While it’s working, text appears at the bottom of the screen indicating what Gemini is doing. Stuff like “Selecting a second portion of Chicken Teriyaki for the combo,” which it did when I told it to order my dinner on Saturday night. Watching Gemini figure things out on the fly really kinda rules. I asked for a chicken combo plate; the menu presented options in half-portion increments, so it correctly added two half servings of chicken.

Gemini figured out that two half portions would equal one order of chicken teriyaki.

Gemini had more trouble finding the side of vegetables featured right in the middle of the screen here.

It’s for the best that when you start an automation with Gemini, the default behavior is for it to run in the background. You have to tap a button and open another window if you want to watch Gemini work through the task. And it can be excruciating. Watching the computer try to find a side of vegetables on an Uber Eats menu when it’s sitting right there at the top of the screen is like watching a horror movie and knowing the killer is in the closet right next to the protagonist. I mean, other than the murder part. Gemini made a couple of wrong turns as it put together my teriyaki order, which it eventually sorted out on its own, but the whole episode took about nine minutes. Not ideal.

Gemini is supposed to carry out your task right up to the point where it’s time to hit confirm and order your car or dinner, so you can double-check its work. This, I think, is the only sane way to use this feature right now, and I don’t mind the added friction of completing the order myself. In the tests I’ve run over the past five days, it has never gone rogue and finished an order for me. And it’s surprisingly accurate; I’ve had to make very few adjustments to the final order. When it fails (which I’ve seen happen a couple of times), it tends to be within the first minute or two, when something about the app needs my attention: granting it permission to use my location, for example, or changing the delivery address to home rather than Nevada, which was the last place I used that app. I had to figure out what the problem was in cases like these, but once it was sorted out I was able to restart the automation without an issue.

Here’s the one that really got me. I put an event on my calendar for a flight to San Francisco the following day (a fake trip for me, but real flight details). I gave Gemini a vague prompt to schedule an Uber that would get me to the airport in time for my flight tomorrow. Because Gemini has access to my email and calendar, it can go find that information. It did need a little extra guidance, probably because the flight wasn’t in my email as it expected. With that, though, it found the flight info, suggested leaving by 11:30 or 11:45AM (sensible timing for a 1:45PM flight, given that I live close to the airport), and asked if I wanted to schedule a ride for one of those times. I confirmed the time, and it set up the ride in about three minutes with no further input required on my part.

It’s a little more impressive when you consider that Uber doesn’t even call it scheduling a ride; you reserve a ride. That’s the key difference between the digital assistants we’ve been using and the AI assistants emerging now. Being able to use natural language when talking to the computer makes a huge difference when you’re controlling your smart home or placing your dinner order. If the computer is going to get tripped up and ask for clarification when you forget that the restaurant calls your meal a “plate” and not a “combo,” or when you ask for “slaw” instead of “shredded cabbage,” then it’s no more useful than the assistants we’ve been using for the past decade to set timers and play music.

That said, watching Gemini tap and scroll around Uber Eats makes one thing painfully obvious: if you were designing an app for AI to use, it would look nothing like the ones we have today. You know, apps designed for humans. An AI assistant won’t be tempted by a big ad in the middle of a page offering 30 percent off your order. An appetizing, well-staged photo of the dish it’s ordering is no more convincing than a low-quality one. You’d give it a database, not a bunch of clutter to weed through, and that’s something the industry is working toward with the Model Context Protocol, or MCP.

An AI model reasoning its way through a human-centric interface seems like the most impractical and brittle way to place a pizza order. It does hit a snag occasionally, and it’s not great at telling you why it couldn’t do something. This version of task automation feels like a stopgap until app developers adopt more robust methods: MCP or Android’s app functions. Google’s head of Android, Sameer Samat, told me recently that Gemini takes the reasoning approach in the absence of the other two. Maybe this version of task automation is our preview of what’s possible, or a way to prod developers into adopting one of the other methods. Either way, it feels like a notable first step toward a new way of using our mobile assistants: awkward, slow, but very promising.

Images by Allison Johnson / The Verge
