Native LLMs are nice for privateness; they run regionally and require no subscription. That’s, till you notice they undergo the identical issues their cloud counterparts do: extraordinarily restricted security guardrails. Security measures on-line are one factor, however when you’re working your AI mannequin regionally, bypassing them needs to be simple, proper?

Seems, it truly is. Should you’ve ever gone down the native LLM rabbit gap, you will need to have come throughout abliterated fashions. Native LLMs have already made cloud variations out of date for sure duties, and when you run them with out restrictions, you will by no means take a look at common fashions the identical method.

What abliterated LLMs truly are

Stripped-down fashions with their guardrails eliminated

Yadullah Abidi / MakeUseOf

AI fashions undergo a course of known as RLHF or bolstered studying from human suggestions earlier than launch. This course of trains the mannequin to refuse requests it deems dangerous or delicate. The mannequin learns a particular path in its activation area that teaches it what requests to refuse, therefore implementing security guardrails that forestall it from abuse when carried out in on-line providers.

However this refusal conduct is not scattered randomly throughout the mannequin’s weights; it is concentrated round a single, identifiable vector in its residual stream. Abliteration is the method of eradicating that vector, not by coaching or fine-tuning the coaching knowledge, however by mathematically re-aligning (known as orthogonalizing in technical phrases) the mannequin’s present weights in order that the refusal path merely can not work anymore.

In layman’s phrases, your commonplace LLM has a psychological exit ramp it may take each time confronted with a request deemed dangerous. Abliteration bodily removes that exit ramp from the map, which means the mannequin simply goes via your immediate anyway with none change within the coaching knowledge. There are already attention-grabbing methods to make use of a neighborhood LLM with MCP instruments, however utilizing an abliterated LLM opens totally new use circumstances that merely aren’t attainable with common fashions.

Choosing the proper mannequin issues

Not all native LLMs behave the identical

Screenshot by Yadullah Abidi | No Attribution Required.

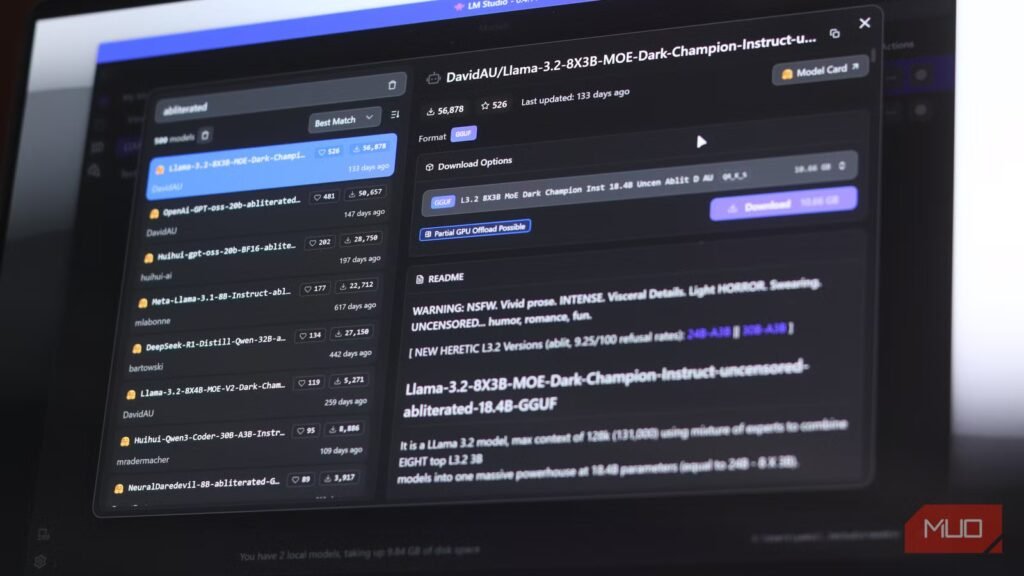

There are dozens of abliterated fashions floating round on Hugging Face you can obtain and take a look at, together with Llama, Qwen, Gemma, Mistral, and extra. I made a decision to check out mlabonne/Meta-Llama-3.1-8B-Instruct-abliterated, an 8B mannequin primarily based on Meta’s Llama 3.1 Instruct structure and the refusal path eliminated through weight orthogonalization. It runs comfortably in nearly any native LLM app, together with LM Studio and Ollama, on a mid-range machine with 8GB of VRAM, particularly in GGUF This autumn or Q5 quantization.

The rationale I picked this particular mannequin over options like Gemma or Qwen is reliability. Group testing of abliterated Gemma 3 fashions has been hit and miss, with testers on Reddit reporting nonsensical outputs and fashions that cease functioning after just a few tokens. The Llama 3.1 model has a way more secure popularity, masses cleanly utilizing the usual Llama 3 chat preset, and responds as anticipated the second you begin speaking to it.

Understand that abliterated fashions are totally different from conventional uncensored fashions just like the Dolphin collection. Dolphin achieves its openness via fine-tuning its coaching dataset—it is conditioned to not refuse. An abliterated mannequin would not have that coaching. As an alternative, the refusal mechanism has merely been eliminated on the weight stage after coaching.

Neither is strictly higher, although. Dolphin fashions are usually extra secure and polished for on a regular basis use, whereas abliterated fashions are nearer to the bottom character of the unique mannequin, simply with none restrictions.

My first dialog felt totally different

No filters, no nudges, simply uncooked responses

Loading an abliterated mannequin is not any totally different out of your common one. Identical interface, identical context window, identical stream of tokens showing on the display screen. However whenever you ask one thing that may ship a daily mannequin scrambling for its security measures, an abliterated one merely responds.

For instance, when requested methods to hack a Wi-Fi community, the common Llama 3.1 merely mentioned that it could not assist me. The ablated model, nonetheless, responded with an inventory of strategies I may use, the instruments I would wish, and a step-by-step information to comply with—all whereas nonetheless giving a warning that hacking a Wi-Fi community is illegitimate with out permission, and I ought to receive consent earlier than trying an assault.

The tonal shift is tough to explain till you expertise it for your self. Most consumer-grade LLMs have a really particular conversational tone. It is barely formal, and rapidly will get nervous everytime you’re getting near a delicate subject. Even when they do assist, you will be served loads of warnings and caveats to attempt to persuade you to not comply with via with the duty. An abliterated mannequin has none of that. The dialog flows in another way within the sense that it truly feels such as you’re bouncing concepts off somebody who’s truly engaged, as an alternative of speaking to a toned-down, customer support chatbot.

You’ll be able to go locations different fashions gained’t

The liberty and the dangers of no restrictions

Screenshot by Yadullah Abidi | No Attribution Required.

Aside from eradicating their security guardrails, abliterated fashions typically have a completely totally different high quality to their conversations. That reveals in a couple of method.

For starters, commonplace instruction fashions deplete cognitive cycles as a result of the mannequin is consistently self-monitoring. Each response subtly displays a mannequin that’s concurrently making an attempt to reply you and making an attempt to not say something mistaken. This twin object can bleed into the mannequin’s tone, sentence construction, and general confidence.

The abliterated mannequin, nonetheless, would not second-guess itself. For instance, once I requested it to put in writing a morally ambiguous fictional character, it simply wrote one, with out softening the character later to make it extra regular. If one thing is unhealthy, the mannequin clearly tells you so. It would not play at diplomacy or account for what others would possibly assume. Should you ask for an evaluation, you get an sincere one, with out the mannequin making an attempt to play each side.

The tradeoff right here is that abliteration may cause a dip in benchmark efficiency. MMLU scores, reasoning duties, and coherence on advanced agentic workflows can take successful. The Llama 3.1 8B abliterated mannequin I examined addresses this through a subsequent DPI fine-tune cross that recovers misplaced efficiency, however you are still shedding out in comparison with a daily LLM.

In layman’s phrases, in comparison with a full mannequin, abliterated LLMs can neglect directions mid-way, wrestle with multi-step reasoning, lose context rapidly, fail constraint-heavy prompts, and hallucinate extra typically. Should you’re letting your AI mannequin run free, you’ll have to give in to its biases and weaknesses as nicely.

So is it truly price it?

When native, unfiltered AI is smart (and when it doesn’t)

Abliterated fashions aren’t for everybody, they usually’re not making an attempt to be both. They’re for individuals who need a native assistant that operates with full belief and no parental controls, editorial interventions, and security guardrails deciding what you are allowed to ask within the privateness of your personal machine.

Associated

I now use this offline AI assistant as an alternative of cloud chatbots

Even with cloud-based chatbots, I will all the time use this offline AI assistant I discovered.

For researchers, writers, and builders constructing instruments that want direct solutions, or anybody merely uninterested in the relentless warning that mainstream AI instruments train, an ablitreated mannequin is the way in which to go. For every part else, run-of-the-mill AI fashions and instruments nonetheless reign supreme.

That mentioned, as soon as you’ve got talked to an abliterated mannequin, going again to a normal mannequin will really feel limiting, despite the fact that the latter would possibly technically be higher. You will get the reply, simply not the mannequin’s character.