Everyone has an opinion about the best AI chatbot. Most of those opinions, sadly, are based on vibes, company-written benchmarks, or whichever model impressed someone that day. I wanted a better way to test leading chatbots against my real work, free from my own assumptions.

It turns out that the tool already exists, it's free, and it changed which AI chatbot I actually reach for every day.


Why most “best AI chatbot” lists don't actually help you

Your tasks are different from their benchmarks

Credit: Bryan M. Wolfe / MakeUseOf

Most AI chatbot comparisons test the same things: write a poem, explain quantum physics, or solve a math problem. These types of prompts show general capability but tell you little about whether a model fits your needs.

I write about technology. My real tasks are drafting article sections, summarizing research, rephrasing awkward sentences, and generating quick code snippets. Benchmarks on creative writing or advanced calculus don't help me.

I needed a way to test top models on my tasks, ideally without knowing which model I was using. Bias is real. If I know it's Claude or ChatGPT, I might subconsciously grade on a curve.

How Chatbot Arena tests AI models without bias

What makes blind testing more reliable than reviews

Credit: Bryan M. Wolfe / MakeUseOf

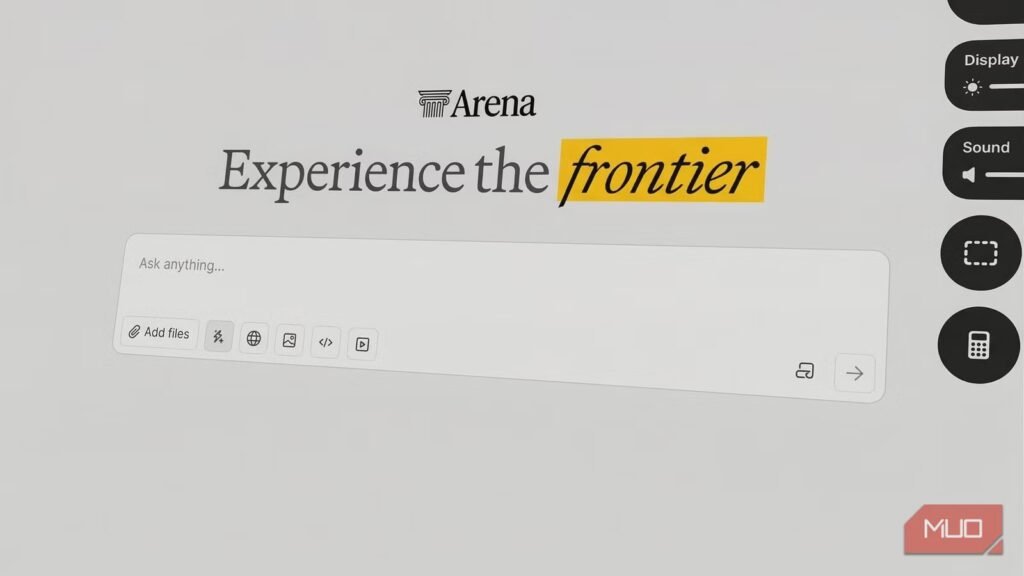

Chatbot Arena from UC Berkeley removes the marketing noise. Type a prompt and get two anonymous AI responses side by side. Pick the winner. After voting, the platform reveals which models you compared.

The blind testing is the whole game. You can't favor a brand you've paid for or a model you've already decided is best. You're just comparing two responses to your actual prompt and picking the better one.

The platform tracks votes from millions of users and ranks models using an Elo-style system, like chess. But the global leaderboard isn't what matters. Run your own tasks and see which model wins for you.
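If "Elo-style" doesn't mean much to you, here's a minimal sketch of the classic chess formula applied to a single blind vote. Treat it as an illustration only: the starting ratings of 1000 and the K-factor of 32 are my assumptions, and the platform's actual rating math may well differ.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the classic Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0):
    """Return the new (rating_a, rating_b) after one head-to-head vote.

    k is an assumed K-factor; a larger value makes ratings move faster.
    """
    expected_a = expected_score(rating_a, rating_b)
    actual_a = 1.0 if a_won else 0.0
    delta = k * (actual_a - expected_a)
    # Whatever the winner gains, the loser gives up.
    return rating_a + delta, rating_b - delta


# Two models start level (assumed rating of 1000) and model A wins the vote.
new_a, new_b = update_elo(1000.0, 1000.0, a_won=True)
print(new_a, new_b)  # 1016.0 984.0
```

The key idea is that an upset moves ratings more than an expected win does, which is how millions of individual votes settle into a ranking.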

It's free, no account is required to get started, and it takes about 30 minutes to get a real read on which model fits your workflow.


I ran 40 blind AI matchups on real writing tasks: here's what happened

The four task categories I used (and why they matter)


I ran four task categories I encounter in real work every week, with 10 matchups per category:

- Writing and editing: Rough draft paragraphs submitted for tightening.

- Research summarization: Long blocks of technical text submitted for clear, reader-friendly summaries.

- Headline and title generation: Given an article topic and angle, which model produced the most compelling options?

- Explaining complicated topics simply: Take something technical and make it understandable without dumbing it down. A core skill for anyone writing about tech.

I tracked my results manually after each reveal with a simple running tally by category; 40 rounds total.
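A paper tally works fine, but if you'd rather keep it in a script, something like the sketch below would do the same job. The function name, category labels, and model names are placeholders I made up for illustration, and the sample rounds are dummy entries, not my actual results.

```python
from collections import Counter, defaultdict


def tally_wins(rounds):
    """Count wins per model within each task category.

    rounds: iterable of (category, winning_model) pairs, one per matchup.
    """
    per_category = defaultdict(Counter)
    for category, winner in rounds:
        per_category[category][winner] += 1
    return per_category


# Dummy log entries for illustration only (hypothetical model names).
rounds = [
    ("editing", "model-x"),
    ("editing", "model-y"),
    ("editing", "model-x"),
    ("summarization", "model-x"),
]

tally = tally_wins(rounds)
for category, counts in tally.items():
    leader, wins = counts.most_common(1)[0]
    print(f"{category}: {leader} leads with {wins} of {sum(counts.values())}")
```

Logging the winner right after each reveal keeps the record honest, since you can't go back and adjust a vote once you know the names.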

Across all four tasks, Claude came out on top. Forty rounds of blind testing, and one model consistently produced responses I preferred before I knew who wrote them. That's about as clean a result as this kind of testing can produce.

Gemini 3.1 Pro Preview was a credible second. It finished behind the ever-evolving Claude overall, but was the only model that regularly challenged it. In a few writing and summarization matchups, the gap was narrow enough that I hesitated before voting. If you're a heavy Gemini user, you're not making a bad choice; you're just not making the best one, at least for writing-adjacent tasks.

I expected the coding results to be closer, but Claude's responses were cleaner, better structured, and worked on the first try without fixes for “hallucinated” libraries or broken syntax.

The biggest surprise? The “big names” didn't always hold up. Even with GPT-4o included, only Claude and Gemini consistently led. Others sometimes did well, but these two outperformed on my professional tasks.

How to find the best AI chatbot for your specific tasks

The four rules that make your results actually meaningful


Go to lmarena.ai and use prompts from actual work. Don't rely on hypotheticals. Instead, paste in a real email you need to rewrite, a real document to summarize, or a real problem to solve.

Run volume: Aim for at least eight to 10 matchups per task type before deciding. Single matchups are noisy; patterns appear with volume.

Track manually: Keep a simple running tally using something like Apple Notes, a sticky note, whatever. Chatbot Arena doesn't generate a personal results summary, so manual logging is the way to go.

Trust the hesitation: Notice when you hesitate before voting. This usually means the models are close on that task, which is useful to know even without a clear winner.
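To put a rough number on why volume matters, here's a back-of-the-envelope sketch. It assumes a simplified setup that isn't quite how the platform works: the same two models face each other every round, there are no ties, and each vote is independent. Under those assumptions, it computes how often a model with no real edge would still rack up a given number of wins by luck alone.

```python
from math import comb


def chance_of_at_least(wins: int, rounds: int) -> float:
    """Probability of at least `wins` wins in `rounds` fair coin-flip matchups.

    Models two equally good chatbots, so every round is a 50/50 toss.
    """
    favorable = sum(comb(rounds, k) for k in range(wins, rounds + 1))
    return favorable / 2 ** rounds


print(f"{chance_of_at_least(1, 1):.1%}")   # 50.0%
print(f"{chance_of_at_least(7, 10):.1%}")  # 17.2%
print(f"{chance_of_at_least(8, 10):.1%}")  # 5.5%
```

In other words, a single win tells you nothing, and even 7 out of 10 happens by chance about one time in six. A lopsided score over 10 rounds is much harder to explain away.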

Claude Opus 4.6 won, but the real winner is the method

While global rankings can generate debate, it's your personal experimentation that determines which AI model actually maximizes your productivity.

Forty blind matchups with my real work gave me a clear answer: Claude Opus 4.6 performs best for my actual tasks. Not because I expected it to win (I didn't), but because it consistently outperformed before the reveal.

However, there's one major caveat: speed. In the AI world, the “best” model is a moving target. These models are constantly updated; what leads the pack today might be obsolete in 30 days or even six months. Right now, Claude is my winner, but the beauty of Chatbot Arena is that I can rerun this entire experiment next month to see whether a new Gemini update or a surprise GPT release has taken the lead.

Ultimately, blind testing isn't just about validation. It's about staying open to discovering new preferences as models evolve.