When you pay for a subscription every month, you expect the service to work flawlessly. And when you’re working with AI tools, hitting a rate limit mid-work can be quite a frustrating experience. Not to mention that all your work, and any sensitive data or documents you work with, are being sent over to an unknown server.

Luckily, there are tons of apps you can use to run local LLMs. Local LLMs have also come a long way, to the point where you can run lightweight AI models on almost any machine. They aren’t good at everything, but they do some tasks so well you’d want to cancel that cloud AI subscription right away.

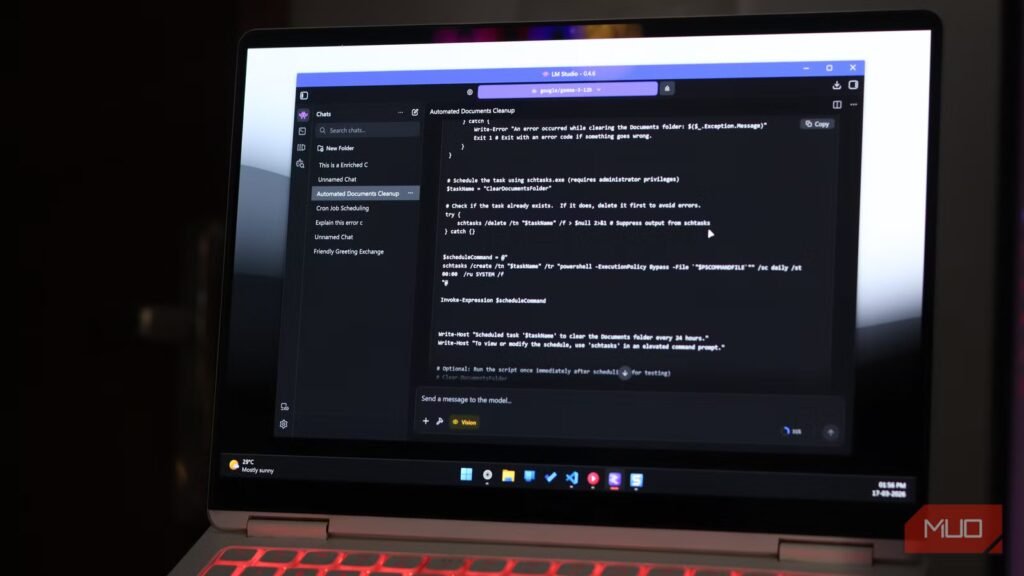

Writing shell scripts without googling every command

Turning plain English into working bash scripts

This is what I use my local LLMs for most often. Describing a simple, repetitive system task in plain English and getting a working Bash or Python script back in seconds saves far more time than you’d imagine. It’s good for spinning up quick scripts to rename multiple files, compress and move folders, or automate basic system maintenance. AI models can also explain what each flag and argument does, which makes them great for any command-line tools that you’re aware of but don’t necessarily know how to use.

Additionally, when these scripts touch your file system, directory structure, cron jobs, or internal server paths, none of that context ever leaves your machine, which is a big deal if you care about how much your tools see of your setup. Describing these commands or tasks to a cloud AI can expose file paths, naming conventions, and even hints of your server topology. A local model sees the same information, and it never leaves your system.
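As a rough sketch of this workflow, the snippet below sends a plain-English task to a locally running Ollama server and returns whatever script the model writes. It assumes Ollama is listening on its default local port and that a model has already been pulled; the model name `mistral`, the prompt wording, and the helper names `build_request` and `generate_script` are illustrative choices, not anything prescribed here.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(task: str, model: str = "mistral") -> dict:
    """Build the JSON payload asking the model for a commented Bash script."""
    prompt = (
        "Write a Bash script for the following task. "
        "Add a comment explaining each flag you use. "
        "Reply with the script only.\n\n"
        f"Task: {task}"
    )
    # stream=False asks the server for one JSON object, not a stream of chunks.
    return {"model": model, "prompt": prompt, "stream": False}


def generate_script(task: str, model: str = "mistral") -> str:
    """Send the request to the local server and return the model's text."""
    data = json.dumps(build_request(task, model)).encode("utf-8")
    req = urllib.request.Request(
        OLLAMA_URL, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req) as resp:
        # The generated text is returned in the "response" field.
        return json.loads(resp.read())["response"]
```

Calling `generate_script("Rename every .jpeg file in ~/Pictures to .jpg")` would print a candidate script to review before running it. The request only ever goes to `localhost`, so the task description and any paths in it stay on the machine.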

Summarizing sensitive files without sending them anywhere

Keeping private documents truly private

Screenshot by Yadullah Abidi | No Attribution Required.

Another pretty compelling use for a local LLM is summarizing private documents. You don’t have to feed a contract, a confidential report from work, medical records, or your personal finance statements into an online server. Cloud AI systems, regardless of their privacy policies, involve your data leaving your machine and being processed on external infrastructure. Local AI eliminates that risk entirely.

Tools like Ollama paired with LangChain can create complete private document summarization pipelines that run entirely on your hardware. You point the model to a PDF, it reads and summarizes it, and at no point does that content touch a third-party server. For anyone with concerns about data sensitivity, this is a non-negotiable advantage.

Offline coding help that understands your setup

Debugging without internet (and without limits)

Credit: Yadullah Abidi / MakeUseOf

Coding with AI tools is always a risk, especially if you’re working with internal APIs, customer data handling, or proprietary logic. You wouldn’t want to send original, sensitive code to a third party’s server, or any outside infrastructure for that matter. It’s fine for enthusiasts or hobby projects, but it starts looking reckless for anything commercially sensitive.

The solution is to simply set up a local coding AI of your own. I’ve already built a local coding AI for VS Code for myself, and it’s shockingly good. It might not be as fast as cloud-based AI services, but depending on your hardware and the model you’re using, local AI coding assistants can come pretty close. Since there’s no network traffic jumping back and forth between servers, the line-by-line completions also feel much snappier. For the bulk of everyday coding tasks (writing utility functions, debugging stack traces, generating boilerplate code, or explaining unfamiliar library syntax), a local coding AI can work out great.

Turning messy meetings into clean, usable notes

No uploads, no delays, no awkward privacy concerns

Just like you wouldn’t want proprietary code going through a third party’s server infrastructure, you wouldn’t want your meetings and work conversations to go there either. Luckily, you can easily put together a local AI transcription and summarization pipeline built around tools like Whisper for speech-to-text and a local LLM for summary generation.

Mind you, it does take a bit of setup, but it runs well on most consumer-grade hardware. The result is a workflow where nothing leaves your control. Summarization with a map-reduce chunking approach, which essentially means breaking long transcripts into smaller pieces, summarizing each, and then combining the results, takes well under 10 seconds for most documents and transcripts, and the quality is good enough for internal use. It also significantly reduces note-taking time.
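The map-reduce chunking idea can be sketched in a few lines. This is a minimal illustration, not the exact pipeline used here: the word-based chunk size of 400 and the function names are assumptions, and `summarize` stands in for whatever call reaches your local model.

```python
from typing import Callable, List


def split_into_chunks(text: str, max_words: int = 400) -> List[str]:
    """Split a transcript into chunks of at most max_words words each."""
    words = text.split()
    return [
        " ".join(words[i:i + max_words])
        for i in range(0, len(words), max_words)
    ]


def map_reduce_summarize(
    text: str,
    summarize: Callable[[str], str],
    max_words: int = 400,
) -> str:
    """Summarize each chunk (map), then summarize the joined summaries (reduce)."""
    chunks = split_into_chunks(text, max_words)
    if not chunks:
        return ""
    if len(chunks) == 1:
        # Short input: a single pass is enough.
        return summarize(chunks[0])
    # Map step: one summary per chunk.
    partial = [summarize(chunk) for chunk in chunks]
    # Reduce step: one final summary over the combined partial summaries.
    return summarize("\n".join(partial))
```

Passing the summarizer in as a function keeps the chunking logic independent of the model, so the same code works whether the summaries come from Ollama, LM Studio, or a stub in a test. For very long transcripts the combined partial summaries may themselves exceed the model's context, in which case the reduce step would need to be repeated.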

A personal assistant that never needs an internet connection

Quick answers without rate limits or logins


A lot of what we use cloud AI for every day is low-stakes questions or mundane tasks that you wouldn’t want to spend mental energy on. Explaining error messages, deciphering Linux commands, and rewriting emails are all tasks that local LLMs can handle just as well, and there’s no meaningful reason for any of that to travel to a cloud server when a local model can answer in a few seconds. Once a local model is running, the cost of a query is essentially zero: no subscription fees and no rate limits.

Running models like Mistral 7B or Gemma through LM Studio or Ollama gives you a fast and persistent AI assistant that works offline, boots quickly, and doesn’t care how many such questions you throw at it every day. For the routine productivity use that accounts for most of our interactions with online AI tools, that’s more than sufficient.
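For a sense of how little is involved, here is a hedged sketch of a question-and-answer helper that keeps conversation history, assuming an Ollama server on its default local port. The model name `gemma` and the helper names are illustrative assumptions; LM Studio exposes a different local API that is not shown here.

```python
import json
import urllib.request
from typing import Dict, List

# Assumption: Ollama's chat endpoint on its default local port.
CHAT_URL = "http://localhost:11434/api/chat"

Message = Dict[str, str]


def build_chat_payload(
    history: List[Message], question: str, model: str = "gemma"
) -> dict:
    """Combine earlier turns with the new question without mutating history."""
    messages = history + [{"role": "user", "content": question}]
    return {"model": model, "messages": messages, "stream": False}


def ask(history: List[Message], question: str, model: str = "gemma") -> str:
    """Send the conversation to the local model and record both new turns."""
    payload = build_chat_payload(history, question, model)
    req = urllib.request.Request(
        CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        answer = json.loads(resp.read())["message"]["content"]
    # Store the exchange so follow-up questions keep their context.
    history.append({"role": "user", "content": question})
    history.append({"role": "assistant", "content": answer})
    return answer
```

Starting with `history = []` and calling `ask(history, "What does tar -xzf do?")` repeatedly gives a simple running conversation, with no login and no per-query cost beyond local compute.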

Local AI isn’t perfect, but it’s more capable than you think

None of this means local LLMs are universally better. For more complex reasoning tasks, cutting-edge code generation, working with very large contexts, or accessing the internet, cloud models still have a real edge. Hardware requirements are also a consideration. You might not need a beefy GPU to run AI models, but if you’re doing demanding work, you’re going to need hardware that can support a sufficiently powerful model.


That said, the value proposition has shifted. As open-source models like Llama, Mistral, and DeepSeek mature, the quality gap with cloud AI continues to narrow, at least for basic, everyday tasks. What you get in return (full control of your data, zero recurring costs, offline capability, and zero rate limits) is a trade well worth the effort.