I’ve been getting really into local LLMs lately, and I’ve even built my own local AI server. The problem is that it’s an especially expensive hobby, and I don’t have thousands of dollars in hardware lying around to scratch that itch properly.

But I still find myself wanting to try out the biggest open-weight models, and I think I’ve found a pretty good solution to that.

Nvidia Build lets you run open-weight models your hardware can’t

Inference for free

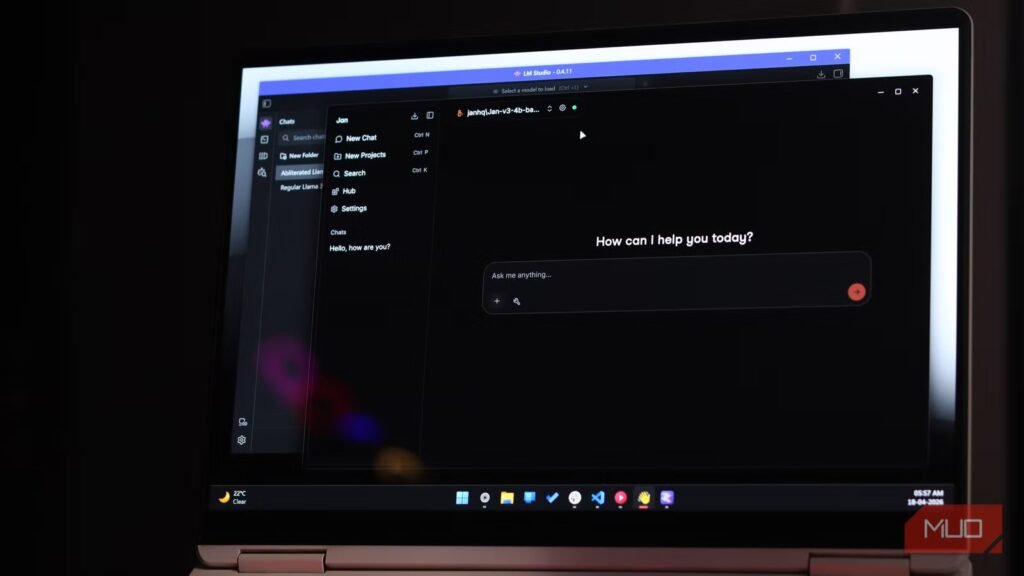

Screenshot by Raghav

Nvidia Build is basically Nvidia’s cloud inference platform for running open-weight LLMs. Without complicating things too much, Nvidia Build takes open-weight models, optimizes them to run on its own DGX Cloud hardware, and gives you API access to them.

To get started, just head over to the Nvidia Build website and create a new account. Once you’ve done that, I’d recommend going through the catalog and looking for models with the Free Endpoint flag on them. Those are the ones you can use without paying anything. I couldn’t find any official documentation on token limits for the free tier, but there is a rate limit of 40 requests per minute, which is unlikely to bother you for personal use.
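If you script against the free tier, it can help to stay under that 40-requests-per-minute ceiling on your own side rather than waiting to be rejected. Here is a minimal sketch of a sliding-window throttle in Python. The class name `RequestThrottle` and its parameters are made up for illustration, and how the service actually counts requests is an assumption; only the 40-per-minute figure comes from the text above.

```python
import time
from collections import deque


class RequestThrottle:
    """Sliding-window limiter: allow at most `max_calls` per `period` seconds.

    Hypothetical helper for illustration; not part of any Nvidia SDK.
    """

    def __init__(self, max_calls=40, period=60.0,
                 clock=time.monotonic, sleep=time.sleep):
        self.max_calls = max_calls
        self.period = period
        self._clock = clock      # injectable so the logic can be tested
        self._sleep = sleep
        self._stamps = deque()   # start times of recent requests

    def wait(self):
        """Block until another request is allowed, then record it."""
        while True:
            now = self._clock()
            # Forget requests that have aged out of the window.
            while self._stamps and now - self._stamps[0] >= self.period:
                self._stamps.popleft()
            if len(self._stamps) < self.max_calls:
                self._stamps.append(now)
                return
            # Window is full: sleep until the oldest request expires.
            self._sleep(self.period - (now - self._stamps[0]))
```

Call `throttle.wait()` right before each API request. For interactive chat use you will probably never hit the limit; this mostly matters for batch scripts and agent loops.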

If you’re not sure which model to start with, especially for coding tasks, I’d suggest trying MiniMax M2.7 first. You can also try others, like GLM-4.7, though keep in mind that some model families only have their older versions available on the free tier.

The key thing to understand is that these are not toy models or stripped-down demo versions. MiniMax M2.7 is a 230 billion parameter model. There is no practical way to run that at home unless you have a multi-GPU server with hundreds of gigabytes of VRAM. Nvidia is hosting the whole thing on its own infrastructure.

Once you’ve settled on a model, click the profile icon at the top right, head to API Keys, and generate one. Once you have that, you can start doing some genuinely cool stuff with it.

You can do a ton of cool stuff with it

No GPU, no problem

I’ve been using Claude Code for a while now by pointing it at open-weight models, but recently I’ve been playing around with OpenCode, so I decided to give that a shot with Nvidia Build instead. The nice thing is that OpenCode has Nvidia’s integration built right in, so I just had to run /connect, select NVIDIA, enter my API key, and that was it. Within 30 seconds I was up and running on MiniMax M2.7, and it was very impressive, especially considering it costs nothing.

But that’s not all you can do with it. Every model on Nvidia Build exposes an OpenAI-compatible API endpoint, and that matters more than it might sound. The OpenAI API format has basically become the universal standard for AI tooling at this point. Coding harnesses like Cursor and Zed support it as well.
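To make "OpenAI-compatible" concrete, here is a rough sketch of a chat request built with nothing but Python’s standard library. Treat the specifics as assumptions rather than facts from this article: the base URL is the one I believe Nvidia uses for its hosted endpoints, the model ID is a placeholder you would swap for the exact string shown on the model’s catalog page, and `NVIDIA_API_KEY` is just a variable name I picked.

```python
import json
import os
import urllib.request

# Assumptions for illustration; check both against the model's catalog page.
BASE_URL = "https://integrate.api.nvidia.com/v1"
MODEL_ID = "your-model-id-from-the-catalog"  # placeholder, not a real ID


def build_chat_request(api_key, prompt, model=MODEL_ID, base_url=BASE_URL):
    """Build an OpenAI-style POST to <base_url>/chat/completions."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(api_key, prompt):
    """Send the request and return the text of the first reply."""
    req = build_chat_request(api_key, prompt)
    with urllib.request.urlopen(req, timeout=60) as resp:
        reply = json.load(resp)
    return reply["choices"][0]["message"]["content"]


if __name__ == "__main__":
    key = os.environ.get("NVIDIA_API_KEY")  # hypothetical variable name
    if key:
        print(ask(key, "Say hello in one sentence."))
```

The point is that nothing here is Nvidia-specific apart from the URL and the key. Any tool that lets you set a custom OpenAI base URL and API key is doing essentially the same thing under the hood.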

Even Claude Code works, though it needs a little more configuration than OpenCode because it speaks Anthropic’s format natively rather than OpenAI’s. If you want to get it up and running, Nvidia has a support page for that as well.

If you just want to use a model as a chatbot, local chat interfaces like Open WebUI, which lets you run a ChatGPT-style interface on your own machine, can be pointed at it just as easily.

It’s the easiest way to try open-weight models without spending thousands

Memory? In this economy?

Raghav Sethi/MakeUseOf

Running large models locally is genuinely appealing. You get full control, no rate limits, and no data leaving your machine. I understand why people want it. But the hardware required to do it properly is not cheap, and the numbers catch a lot of people off guard.

To run a 70B model comfortably on a single machine, you’re looking at something like a Mac with 128GB of unified memory, which starts around $5,000 (though you can also get acceptable results with 64GB). Or a dual RTX 5090 setup, which will run you somewhere between $9,000 and $12,000 once you factor in the rest of the build.

As of the time of writing, Apple has removed the 128GB Mac Studio configuration. If you’re after pure memory, your best bet right now is the M5 Max MacBook Pro with 128GB of unified memory.

And even then, a 70B model is not the biggest thing on Nvidia Build. MiniMax M2.7 is a 230 billion parameter model. There is no practical consumer hardware path to running that at full precision at home.
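The back-of-the-envelope math behind those numbers is simple enough to script. This sketch estimates the memory needed for the model weights alone. It assumes "full precision" means 16 bits per weight, and it ignores the KV cache, activations, and runtime overhead, so real requirements will be higher.

```python
def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate memory for model weights alone, in decimal gigabytes."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Compare a 70B model and a 230B model at common precisions.
for name, size in [("70B", 70), ("230B", 230)]:
    for bits in (16, 8, 4):
        gb = weight_memory_gb(size, bits)
        print(f"{name} at {bits}-bit: ~{gb:.0f} GB")
```

Under these assumptions, a 70B model needs roughly 140 GB at 16-bit and about 35 GB at 4-bit, which is why 64GB and 128GB machines are in play once you quantize. A 230B model at 16-bit comes out around 460 GB for the weights alone.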

The problem with jumping straight to buying hardware is that you might do it before you actually know what you need. Spending that kind of money and then realizing the models that fit on your machine are not quite good enough for the task you had in mind is a painful situation to be in. Or the opposite: you spend way more than necessary because you weren’t sure where your requirements actually land.

Instead, you can spend a few weeks using the biggest open-weight models at full precision, on real tasks you actually care about, before you commit to anything. If you find that a 70B model does everything you need, maybe a Mac with 128GB gets you there.

If smaller models turn out to be good enough, you might not need to spend nearly as much. And if you find yourself actually needing something in the 200B range regularly, now you know that too, before you’ve spent anything.

It’s not a long-term solution

Nvidia Build is not a replacement for running models locally. You’re still sending your data to someone else’s servers, you’re still rate-limited, and you don’t have the kind of control that makes local inference worth setting up in the first place. But that’s also not really the point.

Think of it as a testing ground. A way to actually use these models for real tasks before you commit to anything. Nvidia Build just happens to be the easiest and cheapest way to get that information right now.