New emergency contact options are now available in ChatGPT, where the AI chatbot will notify someone you know and trust if you’re having an unsafe conversation, such as discussing self-harm or suicide.

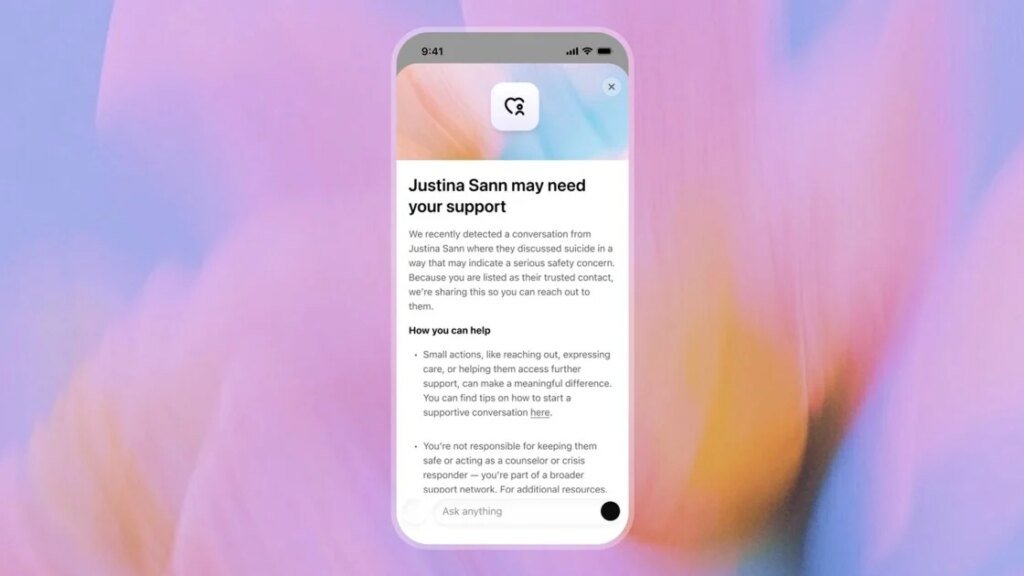

The feature is called “Trusted Contact,” and it’s an optional safety tool that connects your account with another adult and notifies them when there are signs of mental health difficulties in your ChatGPT interactions. OpenAI says, “Trusted Contact helps connect you to a person you trust in moments of emotional crisis.”

You need to set up a contact manually, so users will likely do so only if they have concerns about their own mental health or about someone they know. You need to select an adult who has a ChatGPT account, or doesn't mind setting one up.

Within ChatGPT’s settings, you can choose your contact and send them an invitation via email or phone number, which they must accept within a week for the connection to become active. If they don't accept, you can switch to asking another adult, but you can only have one Trusted Contact at a time.

OpenAI says, “If our automated monitoring systems detect the user may be talking about self-harm in a way that indicates a serious safety concern, ChatGPT lets the user know that we may notify their Trusted Contact, and encourages the user to reach out to their Trusted Contact with suggested conversation starters.”

(Credit: OpenAI)

OpenAI says notifications sent to the “Trusted Contact” about your conversations are limited, giving a generalized reason why self-harm came up in the chat, rather than a detailed transcript of your messages.

This new tool sits alongside ChatGPT’s other processes, so internal reviewers will also review situations when OpenAI’s systems deem it necessary. ChatGPT will also continue to surface tools to encourage users to seek their own real-world help, such as suggestions for emergency services or localized crisis helplines, when discussing unsafe topics.

OpenAI also reaffirms it won’t share instructions for self-harm when a user requests them. In October 2025, OpenAI said its research showed that around 1.2 million users each week talked to ChatGPT about suicide or similar topics.

These new features are similar to an option previously available in ChatGPT’s parental controls. That option first launched after a 16-year-old reportedly spoke to the chatbot about self-harm before later taking his own life. OpenAI claims it isn't responsible for the teen’s death in an ongoing lawsuit with the family.

Disclosure: Ziff Davis, PCMag’s parent company, filed a lawsuit against OpenAI in April 2025, alleging it infringed Ziff Davis copyrights in training and operating its AI systems.

About Our Expert

Experience

I’ve been a journalist for over a decade after getting my start in tech reporting back in 2013. I joined PCMag in 2025, where I cover the latest developments across the tech sphere, writing about the gadgets and services you use every day. Be sure to send me any tips you think PCMag would be interested in.
