if proxy_alive():
    print("\n[10] Combined 10-prompt workload…")
    # Five simple prompts followed by five complex ones, so the router has
    # a reason to pick different model tiers.
    workload = [
        "Capital of France?",
        "Read foo.py",
        "Type hint for a list of dicts",
        "Lowercase: HELLO",
        "One-sentence summary of REST",
        "Refactor a callback chain into async/await with proper error handling",
        "Design a sharded multi-region key-value store with linearizable reads",
        "Analyze the asymptotic complexity of this code and prove the bound rigorously",
        "Debug why our gRPC stream stalls when the client TCP window saturates",
        "Compare and contrast B-trees and LSM-trees for write-heavy workloads",
    ]
    runs = []
    # Point the OpenAI client at the local proxy.
    client = OpenAI(base_url=f"http://localhost:{PORT}/v1", api_key="local")
    for p in workload:
        t0 = time.time()
        try:
            r = client.chat.completions.create(
                model="auto",  # let the proxy choose the model
                messages=[{"role": "user", "content": p}],
                max_tokens=140,
            )
            usage = getattr(r, "usage", None)
            runs.append({
                "prompt": p[:55],
                "model": r.model,
                "latency_s": round(time.time() - t0, 2),
                "in_tok": getattr(usage, "prompt_tokens", 0) if usage else 0,
                "out_tok": getattr(usage, "completion_tokens", 0) if usage else 0,
            })
        except Exception as e:
            # Record the failure as a row so the table still has one entry per prompt.
            runs.append({"prompt": p[:55], "model": "ERROR",
                         "latency_s": None, "in_tok": 0, "out_tok": 0,
                         "error": str(e)[:80]})
    rdf = pd.DataFrame(runs)
    print(rdf.to_string(index=False))

    # Per-token prices in USD (listed per million tokens, hence the 1e6 divisor).
    PRICE = {
        "flash": {"in": 0.30 / 1e6, "out": 2.50 / 1e6},
        "pro": {"in": 1.25 / 1e6, "out": 10.0 / 1e6},
    }

    def price_for(model_str, in_t, out_t):
        # Any model name that does not contain "flash" is billed at the Pro rate.
        m = (model_str or "").lower()
        tier = "flash" if "flash" in m else "pro"
        return in_t * PRICE[tier]["in"] + out_t * PRICE[tier]["out"]

    cost_routed = sum(price_for(r["model"], r["in_tok"], r["out_tok"]) for r in runs)
    cost_no_route = sum(price_for("gemini-2.5-pro", r["in_tok"], r["out_tok"]) for r in runs)
    print(f"\n[10] Cost (NadirClaw routed)        : ${cost_routed:.6f}")
    print(f"     Cost (always-Pro baseline)    : ${cost_no_route:.6f}")
    if cost_no_route > 0:
        print(f"     Estimated savings on this run : "
              f"{(1 - cost_routed / cost_no_route) * 100:.1f}%")

    print("\n[11] `nadirclaw report` (parses the JSONL request log):")
    rep = subprocess.run(["nadirclaw", "report"], capture_output=True, text=True, timeout=60)
    print(rep.stdout or rep.stderr)

if proxy_alive():
    print("\n[12] Stopping the proxy…")
    try:
        # On POSIX, signal the whole process group so child processes exit too.
        if hasattr(os, "killpg"):
            os.killpg(os.getpgid(server_proc.pid), signal.SIGTERM)
        else:
            server_proc.terminate()
        server_proc.wait(timeout=10)
    except Exception:
        # Graceful shutdown failed or timed out, so fall back to a hard kill.
        try:
            server_proc.kill()
        except Exception:
            pass
    print("     ✓ proxy stopped.")

print("\nDone. 🎉")
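
The savings figure printed in step [10] is plain arithmetic over token counts, so it can be checked offline without the proxy running. The sketch below reuses the `PRICE` table and `price_for` from the listing above. The `sample_runs` rows, including their token counts and the `gemini-2.5-flash` label, are hypothetical values chosen for illustration and are not measured output. `savings_pct` is a helper name introduced here.

```python
# Per-token prices in USD, copied from the PRICE table in the listing above.
PRICE = {
    "flash": {"in": 0.30 / 1e6, "out": 2.50 / 1e6},
    "pro": {"in": 1.25 / 1e6, "out": 10.0 / 1e6},
}


def price_for(model_str, in_t, out_t):
    """Cost of one request; any model name without "flash" is billed as Pro."""
    m = (model_str or "").lower()
    tier = "flash" if "flash" in m else "pro"
    return in_t * PRICE[tier]["in"] + out_t * PRICE[tier]["out"]


def savings_pct(runs):
    """Percent saved by routing versus sending every request to the Pro tier."""
    routed = sum(price_for(r["model"], r["in_tok"], r["out_tok"]) for r in runs)
    baseline = sum(price_for("gemini-2.5-pro", r["in_tok"], r["out_tok"]) for r in runs)
    return 0.0 if baseline == 0 else (1 - routed / baseline) * 100


# Hypothetical rows: one request routed to a Flash model, one to a Pro model.
sample_runs = [
    {"model": "gemini-2.5-flash", "in_tok": 1000, "out_tok": 100},
    {"model": "gemini-2.5-pro", "in_tok": 2000, "out_tok": 140},
]

if __name__ == "__main__":
    print(f"Estimated savings: {savings_pct(sample_runs):.1f}%")
```

Because failed requests are recorded with zero tokens, they add nothing to either total, so errors do not distort the percentage. The estimate also assumes the Pro baseline would have consumed the same token counts as the routed run, which is an approximation.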

Trending

- Cease renting your instruments and personal a professional coding setup with MS Visible Studio for lower than $35

- Prepare for the whisper-filled workplace of the long run

- 4 low-cost devices to make your kitchen smarter with out transforming something

- As we speak’s NYT Strands Hints, Reply and Assist for Could 11 #799

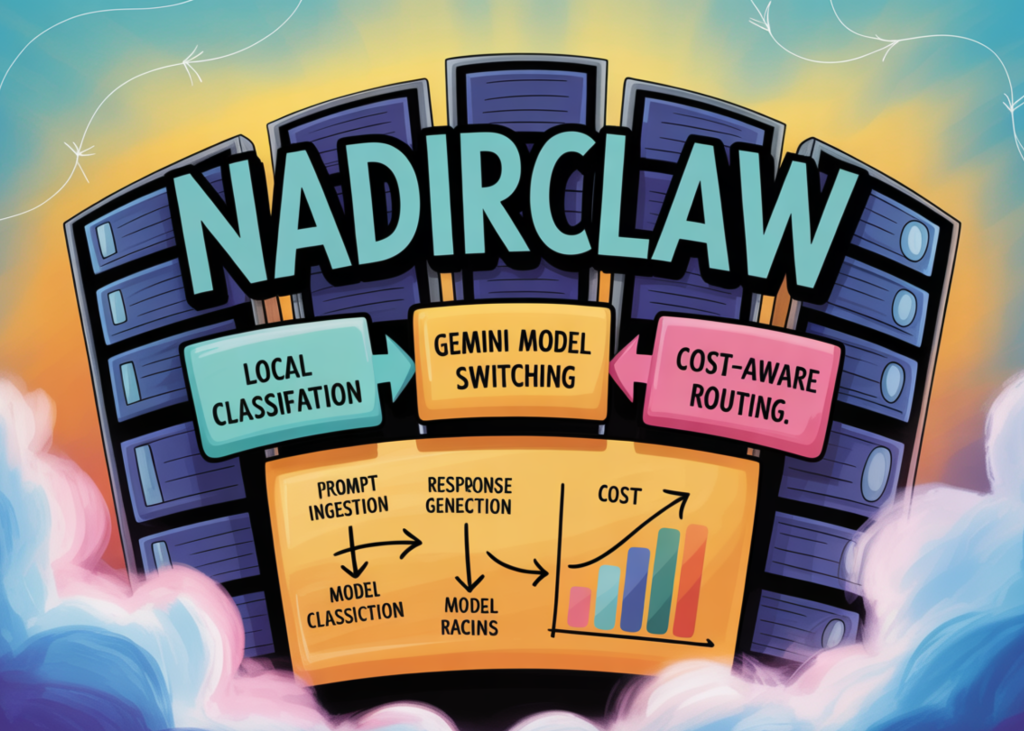

- Construct a Value-Conscious LLM Routing System with NadirClaw Utilizing Native Immediate Classification and Gemini Mannequin Switching

- Trump calls Iran’s response to peace plan ‘completely unacceptable’ as ceasefire frays | US-Israel battle on Iran

- Your Pixel has a hidden emergency characteristic you need to take a look at proper now

- I assumed I wanted an iPhone Professional till I paid consideration to how I truly use it