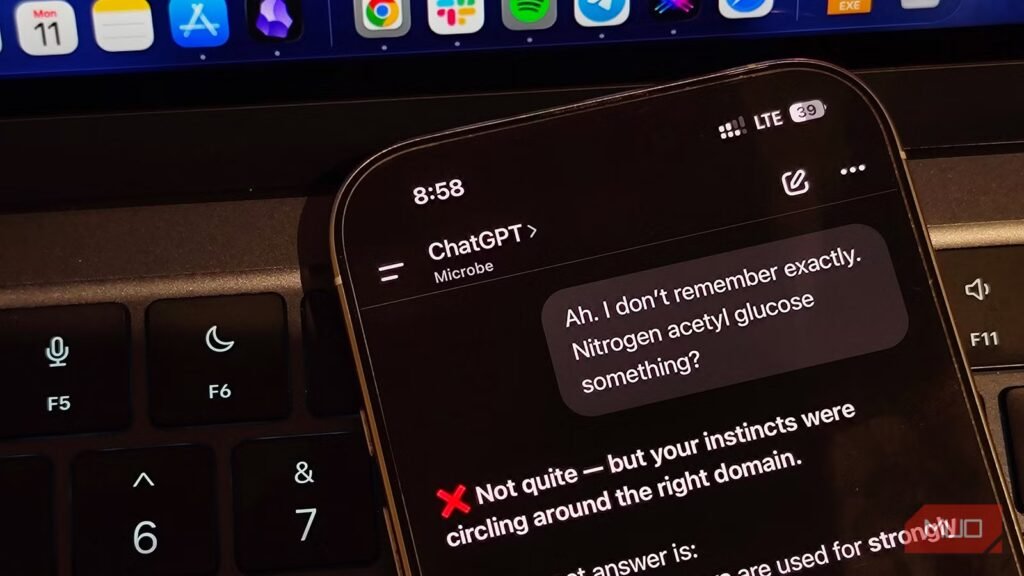

Large Language Models are great at faking confidence. You can ask ChatGPT, Gemini, or Claude about almost everything under the sun, and most of the time you'll get a well-structured, confident-sounding answer right away. However, just because your model sounds confident doesn't necessarily mean it's right.

We're all too familiar with LLM hallucinations: the model casually invents a quote, cites sources that don't exist, or gets dates wrong. You might think AI hallucinations are a thing of the past and that modern models don't hallucinate as much, but that's not the whole truth. Certain types of requests can push your LLM over the edge, and they show up in your daily prompts more often than you might think.


Ask it to do math, and things fall apart

Your favorite AI isn't nearly as good at math as you think it is

LLMs are built to analyze and generate text, not to calculate. This is a core design choice that applies to almost every LLM out there. It works great for prose and everyday communication, but not so well with numbers.

Open Resource Application's April 2026 study benchmarked mathematical calculations against Omni-MATH. It found that average accuracy across models sits at just 0.3861, with GPT-5 mini leading the category. In simple terms, roughly 61% of answers missed the mark, so about three out of every five math problems can come back partially or completely hallucinated.
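To make the benchmark figure concrete, here is a minimal Python sketch that turns an average accuracy score into an expected error rate. The only number taken from the study is the 0.3861 Omni-MATH average. The helper names and the batch of 100 problems are illustrative assumptions.

```python
def error_rate(accuracy: float) -> float:
    """Share of answers expected to be wrong, given an accuracy between 0 and 1."""
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    return 1.0 - accuracy


def expected_errors(accuracy: float, problems: int) -> float:
    """Expected number of wrong answers over a batch of problems."""
    return error_rate(accuracy) * problems


# Average Omni-MATH accuracy reported in the study
omni_math_accuracy = 0.3861

print(f"Error rate: {error_rate(omni_math_accuracy):.1%}")
print(f"Wrong answers per 100 problems: {expected_errors(omni_math_accuracy, 100):.0f}")
```

This is an average across all tested models, so any single model, including the category leader, will land above or below it.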

The AI model isn't solving the equation the way your calculator does. It's predicting which tokens are statistically likely to come next. As mentioned earlier, that works for prose and natural language, but not for arithmetic. If you're asking an LLM to do anything beyond the most basic arithmetic, assume the result needs checking. An AI tool solving complex math problems may not be wrong, but you can't be sure it's right.
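One practical way to check is to recompute the number yourself instead of trusting the model's digits. The Python sketch below is an illustrative assumption, not a feature of any chatbot. The hypothetical `check_claim` helper does three things:

- parses a plain arithmetic expression with the standard `ast` module;
- evaluates it exactly with `fractions.Fraction`;
- compares the result to the answer the model gave.

```python
import ast
import operator
from fractions import Fraction

# Operators this checker accepts; anything else is rejected.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def _evaluate(node: ast.AST) -> Fraction:
    """Recursively evaluate a parsed arithmetic expression with exact fractions."""
    if isinstance(node, ast.Expression):
        return _evaluate(node.body)
    if (isinstance(node, ast.Constant)
            and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)):
        # Go through str() so 0.1 becomes exactly 1/10, not its binary approximation.
        return Fraction(str(node.value))
    if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
        return _OPS[type(node.op)](_evaluate(node.left), _evaluate(node.right))
    if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
        return -_evaluate(node.operand)
    raise ValueError(f"unsupported expression element: {ast.dump(node)}")


def check_claim(expression: str, claimed: str) -> bool:
    """Return True if the model's claimed answer equals the exact result."""
    exact = _evaluate(ast.parse(expression, mode="eval"))
    return exact == Fraction(claimed)


print(check_claim("1234 * 5678", "7006652"))  # True
print(check_claim("1234 * 5678", "7006642"))  # False
print(check_claim("0.1 + 0.2", "0.3"))        # True
```

Exact fractions avoid floating-point noise such as `0.1 + 0.2` giving `0.30000000000000004`, so a mismatch points at the model rather than the checker. The sketch only handles `+`, `-`, `*`, and `/`. Anything beyond that still calls for a real calculator.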

Incomplete data leads to confident nonsense

Analysis often breaks because context is missing

Data analysis seems like the obvious field where an AI model should excel. After all, examining, formatting, and transforming data is a fairly structured, rule-based task. Yet according to the study's GPQA-based scores, you'll get the right answer on this type of task only 52.2% of the time. This time, though, your best bet is Gemini 3 Pro. It also turned out to be the best model for everyday tasks, scoring highest in four of the five tasks the study tested. Pairing Gemini with NotebookLM can significantly improve your research reports, too.

The cause is the same one that makes AI models bad at math. LLMs prioritize predicting the next logical token rather than actually processing and calculating the value. That means when a dataset is incomplete or ambiguous, a model fills the gap with what it thinks should go there, not the correct, calculated value.
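The safer pattern is to let code do the calculating and make gaps visible, so a model can't quietly paper over them. This minimal Python sketch assumes your data is a simple list where `None` marks a missing value. The `summarize` helper and the sample figures are hypothetical.

```python
from statistics import mean
from typing import Optional


def summarize(values: list[Optional[float]]) -> dict:
    """Average only the values that are present and report how many are missing."""
    present = [v for v in values if v is not None]
    missing = len(values) - len(present)
    return {
        "count": len(values),
        "missing": missing,
        # Return None instead of guessing when there is nothing to average.
        "mean": mean(present) if present else None,
    }


# Hypothetical monthly sales figures with two gaps
monthly_sales = [120.0, 135.0, None, 150.0, None, 160.0]
report = summarize(monthly_sales)
if report["missing"]:
    print(f"Warning: {report['missing']} of {report['count']} values are missing")
print(report)
```

An average of four months out of six may or may not be good enough. Either way, the gap is out in the open before you ask an AI model to interpret the numbers.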

Don't treat it like an expert

AI shouldn't replace teachers, doctors, or professional advice

Credit: Gavin Phillips / MakeUseOf

Tutoring is one of the more common uses of AI right now, but research suggests it isn't a reliable one. Tests on teaching-style tasks measured against MMLU-Pro show an accuracy of only 0.67 out of 1. A 67% accuracy might sound fine until you realize it means roughly one in three explanations an AI gives you could be wrong. So leaning on it for a fairly complicated homework assignment might not be the best choice. If you have to, Open Resource Application suggests Gemini 3 Pro.
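The 0.67 figure gets worse when an assignment involves several questions. For illustration only, the Python sketch below assumes each answer is right or wrong independently at the study's 0.67 accuracy. Real errors are rarely that tidy, so treat the output as a rough intuition rather than a measurement.

```python
def chance_all_correct(accuracy: float, answers: int) -> float:
    """Probability that every one of `answers` independent responses is correct."""
    return accuracy ** answers


accuracy = 0.67  # MMLU-Pro teaching-task score reported in the study
for n in (1, 3, 5):
    print(f"{n} answer(s): {chance_all_correct(accuracy, n):.0%} chance all are right")
```

Under that assumption, a three-part problem set comes back fully correct only about 30% of the time. A five-part set drops to roughly 13.5%.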

Health-related questions fall into the same bracket. LLMs can usually summarize general information from the web, but a single outdated or unreliable source is all it takes to make an otherwise confident-sounding explanation wrong. A hallucinated dosage, a made-up condition, or a wrong exercise cue can be a health risk. For anything that affects your body, fitness, beauty, or overall self-care, let a qualified professional make the call.

Citations break LLMs badly

Fabricated sources and links that look convincing

Credit: Amir Bohlooli / MUO

Last but not least, AI models tend to invent information instead of admitting they can't find it. Specific knowledge queries also averaged 0.67 out of 1 on the MMLU-Pro test. So when you ask about a niche topic with few or incomplete sources, the model tends to predict an answer rather than admit it doesn't know.

A citation is the worst possible target for this kind of guesswork, because it has to be exactly right. You might be asking for author names, year, journal, volume, issue, page range, or any other specific data point that usually goes into academic or journalistic reports. Either way, a believable-sounding fake from an AI model is still a fake.
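If you do use a model to draft references, compare every field against a record you found yourself, for example on the journal's own page or in your reference manager. This Python sketch is illustrative. The `Citation` class, the `mismatched_fields` helper, and the sample entries (including the placeholder author and journal names) are all hypothetical. It only flags differences and cannot tell you whether a source exists in the first place.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Citation:
    """The fields a citation has to get exactly right."""
    authors: tuple[str, ...]
    year: int
    journal: str
    volume: str
    issue: str
    pages: str


def mismatched_fields(claimed: Citation, verified: Citation) -> list[str]:
    """List every field where the AI-supplied citation differs from a verified record."""
    return [
        f.name
        for f in fields(Citation)
        if getattr(claimed, f.name) != getattr(verified, f.name)
    ]


# Placeholder records: one you looked up yourself, one a model produced
verified = Citation(("Doe, J.", "Roe, R."), 2021, "Journal of Examples", "12", "3", "45-67")
claimed = Citation(("Doe, J.", "Roe, R."), 2022, "Journal of Examples", "12", "4", "45-67")

print(mismatched_fields(claimed, verified))  # ['year', 'issue']
```

A near-miss like this is exactly the kind of error that looks right at a glance. A field-by-field comparison catches it where skimming would not.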

Trust, but always verify

So what does all of this mean? For starters, it doesn't mean you should hop on the "AI is useless" bandwagon, but you do need to stop assuming that whatever your AI model tells you is true. AI works best when the task at hand is open-ended, creative, or language-focused, where there isn't a single correct answer. Tasks that demand precise, objectively correct answers tend to make these models hallucinate more: math, data analysis, teaching, health, and specific fact-checking.

You can't just avoid these AI tools, especially since they're among the best ones around. What makes the difference is knowing which jobs to hand them and which to keep in human hands. When the stakes are real and accuracy matters, a real expert is still the right choice.