Scaling large language models (LLMs) is expensive. Every token processed during inference and every gradient computed during training flows through feedforward layers that account for over two-thirds of model parameters and more than 80% of total FLOPs in larger models. A team of researchers from Sakana AI and NVIDIA has published new research that directly targets this bottleneck, not by changing the architecture, but by making the computation inside feedforward layers significantly cheaper through unstructured sparsity.

Sparsity Exists, But GPUs Ignore It

Inside a transformer's feedforward block, for any given input token, only a small fraction of hidden neurons actually fire; the rest produce zero after passing through the activation function. This is called activation sparsity, and prior work has documented this phenomenon in models with ReLU activations.

The frustrating reality is that these theoretical savings rarely translate into actual speedups. NVIDIA GPUs are heavily optimized for dense matrix multiplications using Tensor Cores, which operate on large contiguous tiles of data. Traditional sparse formats like ELLPACK (ELL) require a separate kernel pass to convert activations from a dense to a sparse representation, and that conversion overhead often cancels out what is saved by skipping the zeros.

Critically, prior work on sparse LLM kernels (including TurboSparse, ProSparse, and Q-Sparse) has focused on memory-bound GEMV operations, the single- or few-token inference regime. The research team instead targets compute-bound GEMM operations in the batched setting with thousands of input tokens, where dense baselines on modern devices can execute orders-of-magnitude higher FLOP/s with large tiles and Tensor Cores. That is a fundamentally harder problem, and it is the reason prior approaches did not generalize to batched training or high-throughput inference.

01 — The Problem

Feedforward layers dominate LLM cost, and most of that work is wasted.

More than ⅔ of all model parameters live in feedforward layers

80%+ of total FLOPs are consumed by feedforward layers

99%+ of hidden activations can be zero with no accuracy drop

For any given token, only a tiny fraction of hidden neurons actually fire. The rest output zero after the activation function. This is called activation sparsity, and it has historically been impossible to exploit on modern GPUs because sparse operations ran slower than dense ones.

Prior sparse LLM kernels (TurboSparse, ProSparse, Q-Sparse) only targeted single-token GEMV operations. Sakana AI and NVIDIA tackle the harder problem: batched GEMM with thousands of tokens, the regime that covers both training and high-throughput inference.

02 — The Innovation

TwELL: a sparse format built around how GPU kernels actually work.

Old Approach — ELL

Row-wide packing, costly to build

Standard ELLPACK packs non-zeros row-by-row across the entire matrix. To construct it from a tiled matmul output you need a separate kernel launch, a full global memory read, and synchronization across all CTAs. These overheads cancel out the savings from skipping zeros.

New Approach — TwELL

Tile-wise packing, built in the epilogue

TwELL partitions columns into horizontal tiles matching the matmul kernel's tile size T_n. Non-zeros are packed locally within each tile. Because the dimensions match, TwELL is built inside the existing gate projection kernel epilogue: no extra kernel, no extra memory read, no synchronization overhead.

The inference pipeline uses one fused kernel that reads gate activations in TwELL format and performs the up and down projections together. The intermediate hidden state is never written to global memory, cutting DRAM traffic on every forward pass.

For training, a hybrid sparse format dynamically routes rows into a compact ELL matrix (sparse rows) or a dense backup (overflow rows). Sparsity during training is highly non-uniform (the maximum non-zeros per row can be orders of magnitude above the average), so the hybrid design handles this without becoming brittle.

03 — Training Recipe

Two changes to your training config. Nothing else.

01. Replace SiLU with ReLU as the gate activation function. ReLU produces exact zeros for negative inputs, which is what enables unstructured sparsity. No other architectural change is required. (Unregularized ReLU sits slightly below SiLU on task accuracy: 46.4% vs 47.1% on the 1.5B model, offset by the efficiency gains.)

02. Add an L1 loss term on the hidden feedforward activations, averaged over all tokens and hidden dimensions across all layers. Recommended coefficient: L1 = 2×10⁻⁵. Add it to your standard cross-entropy loss. No changes to learning rate, weight decay, batch size, or optimizer.

03. Sparsity stabilizes fast. The non-zero count settles within ~1,000 training steps (~1B tokens). The training kernels deliver memory and throughput benefits for almost the entire training run, not just towards the end.

Watch Out

At L1 = 2×10⁻⁵, over 30% of neurons become permanently inactive (dead neurons) on average across layers. Downstream accuracy is not visibly affected at this level. The paper explores targeted gate weight reinitialization as a mitigation, yielding a +19.1% inference speedup vs +17.9% for the baseline with no accuracy cost.

04 — Benchmark Results

Accuracy preserved. Efficiency scales up with model size.

Mannequin

Accuracy

Inference

Power / tok

Coaching

Peak Mem

0.5B

40.4% → 40.4%

+17.0%

−11.8%

−1.5%

−19.2%

1B

44.6% → 44.7%

+18.1%

−14.6%

+7.1%

−25.5%

1.5B

46.4% → 46.2%

+18.8%

−15.0%

+11.6%

−28.1%

2B

49.1% → 48.8%

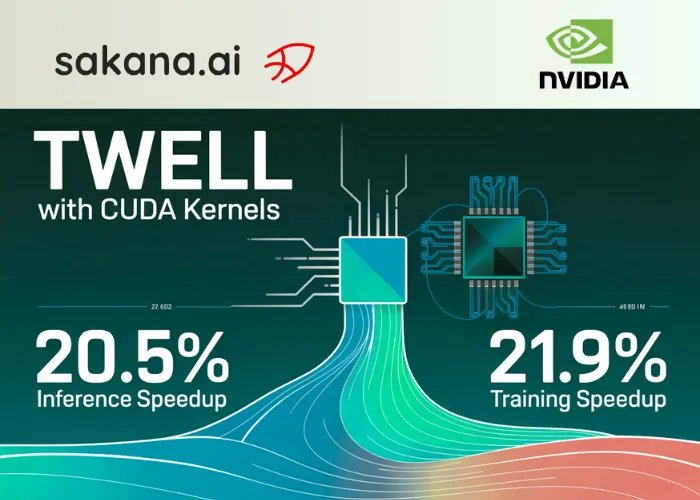

+20.5%

−17.0%

+21.9%

+22.3% *

All results at L1 = 2×10⁻⁵ on a single node of eight H100 PCIe GPUs, sequence length 2048. Efficiency gains grow with scale: average non-zero activations drop from 39 (0.5B) to 24 (2B), giving the sparse kernels proportionally more computation to skip. * The 2B sparse model uses a larger micro-batch enabled by reduced activation memory, raising peak usage while improving throughput.

05 — Key Findings

What the paper reveals about where sparsity actually lives.

◆ Early layers are least active. In a 28-layer 1.5B model, the first two layers have the fewest non-zero activations. Activity peaks in the early-to-middle layers, consistent with prior work showing that LLM reasoning and knowledge retrieval concentrate there.

◆ First tokens in a sequence fire far more neurons. The model allocates exponentially more computation to early sequence positions where contextual cues from prior tokens are absent. This non-uniformity is exactly what the sparse kernels exploit for speedups.

◆ Strong inverse correlation between non-zero count and speedup. The paper measures a Pearson correlation of −0.996 between each layer's average non-zero count and its inference speedup contribution. Sparser layers deliver proportionally larger gains.

◆ Larger gains on less specialized hardware. On NVIDIA RTX PRO 6000 (188 SMs vs 114 on H100), training speedups are significantly higher. Dense GEMM is slower on the RTX PRO 6000, while sparse ops run faster, widening the relative advantage of sparsity on accessible hardware.

06 — Get Started

Open-source. All kernels and training code released.

■ Architecture: Works with gated feedforward LLMs such as Llama, Qwen, and any Transformer++ design. The non-gated (original transformer) variant is also supported: 11.2% inference speedup vs 17.9% for gated at the same L1.

■ Hardware: CUDA kernels written for H100 GPUs using TMA-based pipelining and a persistent cooperative design. Gains verified on RTX PRO 6000 with even larger speedups.

■ Existing models: Fine-tuning via sparsification approaches is flagged as a future direction for bringing these kernels to pretrained dense models; it is not yet demonstrated in this paper.

So, What Exactly Is Proposed

The research team addresses this mismatch with two main contributions: a new sparse data format called TwELL (Tile-wise ELLPACK), and a set of custom CUDA kernels for inference and training built around it.

TwELL is designed around one key insight: modern matmul kernels already divide computation across small 2D tiles (of size T_m × T_n) assigned to individual cooperative thread arrays (CTAs). Standard ELL packs non-zeros row-by-row across the entire matrix, which requires global synchronization to construct from tiled matmul outputs. TwELL instead partitions the columns of the gate activation matrix into horizontal tiles of size T, and within each tile stores non-zero values and their indices in a local ELL-style format. By matching the tile size T to the column tile size T_n of the matmul kernel, TwELL can be produced directly in the epilogue of the gate projection kernel: no extra kernel launch, no additional global memory read, no synchronization across CTAs. The format uses a compression factor C such that T/C exceeds the maximum non-zeros per tile, and packages values, indices, and non-zero counts into a single 32-bit matrix for locality.
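To make the tile-wise layout concrete, here is a minimal pure-Python sketch of the packing idea. It is not the released CUDA kernel: the function names `twell_pack` and `twell_unpack`, the dictionary layout, the `-1` padding index, and the use of global column indices are all assumptions made for illustration. The real format packs everything into a single 32-bit matrix and is built inside the matmul epilogue.

```python
def twell_pack(h, T, C):
    """Pack each row of a dense matrix tile by tile (hypothetical layout).

    Every tile of T columns gets T // C slots, so C acts as the
    compression factor described in the text.
    """
    slots = T // C
    n_cols = len(h[0])
    assert n_cols % T == 0, "sketch assumes the width is a multiple of T"
    packed = []
    for row in h:
        row_tiles = []
        for start in range(0, n_cols, T):
            # Collect (column index, value) pairs for non-zeros in this tile only.
            nz = [(j, v) for j, v in enumerate(row[start:start + T], start) if v != 0.0]
            if len(nz) > slots:
                raise ValueError("tile overflow: choose a smaller C")
            pad = slots - len(nz)
            row_tiles.append({
                "count": len(nz),
                "indices": [j for j, _ in nz] + [-1] * pad,
                "values": [v for _, v in nz] + [0.0] * pad,
            })
        packed.append(row_tiles)
    return packed


def twell_unpack(packed, n_cols):
    """Rebuild the dense matrix, reading only `count` slots per tile."""
    dense = []
    for row_tiles in packed:
        row = [0.0] * n_cols
        for tile in row_tiles:
            for k in range(tile["count"]):
                row[tile["indices"][k]] = tile["values"][k]
        dense.append(row)
    return dense


# Toy gate activations: 2 tokens, 8 hidden neurons, tiles of 4 columns.
h = [
    [0.0, 1.5, 0.0, 0.0, 0.0, 0.0, 2.0, 0.0],
    [0.0, 0.0, 0.0, 0.7, 0.0, 0.0, 0.0, 0.0],
]
packed = twell_pack(h, T=4, C=2)
print(packed[0][1])
```

Because each tile is packed independently, nothing in this loop needs information from another tile, which is the property that lets a CTA emit its own tile without global synchronization.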

https://pub.sakana.ai/sparser-faster-llms/

For inference, a single fused kernel takes the gate activations in TwELL format and performs the up and down projections together. Each CTA handles one row of inputs, iterating first statically over column tiles and then dynamically over each tile's non-zero count. For each active neuron at index n, the CTA loads the n-th column of the up projection weight matrix W_u and the n-th row of the down projection weight matrix W_d, computes the dot product, and accumulates into the output. The intermediate hidden state h_u is never materialized in global memory, cutting DRAM traffic significantly.
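The per-neuron loop described above can be sketched for a single token in plain Python. This is an illustrative reference under stated assumptions, not the fused CUDA kernel: the helper names, the toy weights, and the `(index, gate value)` list standing in for the TwELL input are all hypothetical. The sketch checks that visiting only active neurons gives the same output as the dense gated feedforward computation.

```python
def relu(v):
    return v if v > 0.0 else 0.0


def dense_ffn(x, W_g, W_u, W_d):
    """Dense gated feedforward for one token: (relu(x W_g) * (x W_u)) W_d."""
    y = [0.0] * len(W_d[0])
    for n in range(len(W_d)):
        gate = relu(sum(x[i] * W_g[i][n] for i in range(len(x))))
        up = sum(x[i] * W_u[i][n] for i in range(len(x)))
        for j in range(len(y)):
            y[j] += gate * up * W_d[n][j]
    return y


def sparse_ffn(x, active, W_u, W_d):
    """Visit only active neurons; `active` holds (neuron index, gate value) pairs."""
    y = [0.0] * len(W_d[0])
    for n, gate in active:
        up = sum(x[i] * W_u[i][n] for i in range(len(x)))  # column n of W_u
        h = gate * up  # scalar only; no full hidden vector is stored
        for j, w in enumerate(W_d[n]):  # row n of W_d
            y[j] += h * w
    return y


# Toy sizes: model dim 2, hidden dim 4, output dim 2 (illustrative values).
x = [1.0, -2.0]
W_g = [[1.0, -1.0, 0.5, 0.0], [1.0, 0.5, -1.0, -2.0]]
W_u = [[1.0, 0.0, 2.0, 1.0], [0.0, 1.0, -1.0, -1.0]]
W_d = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]

gates = [relu(sum(x[i] * W_g[i][n] for i in range(len(x)))) for n in range(4)]
active = [(n, g) for n, g in enumerate(gates) if g > 0.0]

y_dense = dense_ffn(x, W_g, W_u, W_d)
y_sparse = sparse_ffn(x, active, W_u, W_d)
print(active, y_sparse)
```

In this toy case two of the four neurons are active, so the sparse path does half the up and down projection work; at the sparsity levels reported in the article the skipped fraction is far larger.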

For training, the situation is more complex because sparsity patterns are highly non-uniform across tokens and layers: the maximum non-zeros per row can be orders of magnitude above the average, making a pure ELL format brittle. The research team introduces a hybrid sparse format that dynamically routes rows either into a compact ELL matrix (for rows below a non-zero threshold) or into a dense backup matrix (for overflow rows). This allows efficient sparse gradient computation in the backward pass without requiring dense-to-dense matmuls for most rows. The team also releases kernels for the original non-gated transformer feedforward block; at the recommended sparsity level, the non-gated variant achieves an 11.2% inference speedup compared to 17.9% for the gated design.
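The routing rule can be illustrated with a small sketch. The function name `route_rows`, the `max_nnz` threshold parameter, the inclusive comparison, and the dictionary layout are assumptions for illustration; the article does not specify how the released kernels choose the threshold or store the two parts.

```python
def route_rows(h, max_nnz):
    """Split rows into a fixed-width ELL part and a dense backup (hypothetical layout)."""
    ell = {"rows": [], "indices": [], "values": []}
    dense = {"rows": [], "values": []}
    for r, row in enumerate(h):
        nz = [(j, v) for j, v in enumerate(row) if v != 0.0]
        if len(nz) <= max_nnz:
            # Sparse enough: store padded (index, value) slots of width max_nnz.
            pad = max_nnz - len(nz)
            ell["rows"].append(r)
            ell["indices"].append([j for j, _ in nz] + [-1] * pad)
            ell["values"].append([v for _, v in nz] + [0.0] * pad)
        else:
            # Overflow row: keep it dense so one outlier does not widen every ELL row.
            dense["rows"].append(r)
            dense["values"].append(list(row))
    return ell, dense


h = [
    [0.0, 0.0, 1.0, 0.0, 0.0, 0.0],
    [0.5, 1.0, 2.0, 0.0, 3.0, 4.0],  # five non-zeros: overflows max_nnz=2
    [0.0, 2.0, 0.0, 0.0, 0.0, 1.5],
]
ell, dense = route_rows(h, max_nnz=2)
print(ell["rows"], dense["rows"])
```

Without the dense backup, the ELL width would have to be five to fit the middle row, and the two sparse rows would carry mostly padding.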

Just ReLU and L1 Regularization

The sparsity induction strategy is deliberately minimal. The research team uses ReLU as the gate activation function and adds a simple L1 loss term on the hidden feedforward activations, controlled by a coefficient L1. No other architectural changes are required, and the research team reports that adding L1 regularization did not affect other hyperparameters (learning rate, weight decay, optimizer settings).
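As a rough sketch of how the regularizer combines with the language-modeling loss, the snippet below averages absolute hidden activations over tokens, hidden dimensions, and layers, then adds the scaled result to a cross-entropy value. The function names and the nested-list input are hypothetical, and averaging jointly over layers (rather than summing per-layer means) is an assumption; a real training loop would compute this on framework tensors so gradients flow through it.

```python
L1_COEFF = 2e-5  # recommended coefficient quoted in the article


def l1_activation_penalty(hidden_per_layer):
    """Mean absolute activation over layers, tokens, and hidden dimensions."""
    total, count = 0.0, 0
    for layer in hidden_per_layer:      # one entry per feedforward layer
        for token in layer:             # one hidden vector per token
            total += sum(abs(v) for v in token)
            count += len(token)
    return total / count


def total_loss(cross_entropy, hidden_per_layer, coeff=L1_COEFF):
    """Cross-entropy plus the scaled L1 activation term."""
    return cross_entropy + coeff * l1_activation_penalty(hidden_per_layer)


# Toy example: 2 layers, 2 tokens, hidden size 2 (illustrative values only).
hidden = [
    [[0.0, 2.0], [0.0, 0.0]],
    [[1.0, 0.0], [0.0, 1.0]],
]
print(l1_activation_penalty(hidden), total_loss(3.0, hidden))
```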

Models were trained on the FineWeb dataset (a deduplicated FineWeb-Edu split) at Chinchilla-optimal token counts, roughly 10B tokens for a 0.5B model up to 40B tokens for a 2B model, with a context length of 2048 and a batch size of 1M tokens.
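The token budgets quoted above imply a simple back-of-the-envelope calculation. The ratio of 20 tokens per parameter is inferred here from the two quoted endpoints rather than stated in the article, and "1M tokens" is read as exactly one million; both are assumptions, as are the names below.

```python
TOKENS_PER_PARAM = 20        # assumption: inferred from 10B tokens at 0.5B params
BATCH_TOKENS = 1_000_000     # assumption: "1M tokens" read as 10**6


def token_budget(n_params):
    """Approximate training tokens for a model with n_params parameters."""
    return TOKENS_PER_PARAM * n_params


def optimizer_steps(n_params):
    """Approximate number of optimizer steps at the quoted batch size."""
    return token_budget(n_params) // BATCH_TOKENS


for n_params in (500_000_000, 2_000_000_000):
    print(n_params, token_budget(n_params), optimizer_steps(n_params))
```

Under these assumptions a run spans on the order of ten thousand to forty thousand optimizer steps.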

Testing eight L1 coefficient values on a 1.5B parameter model, they find that up to L1 = 3 × 10⁻⁵, there is essentially no drop in mean task accuracy across seven downstream benchmarks (ARC Easy/Challenge, HellaSwag, OpenBookQA, PIQA, WinoGrande, CommonsenseQA), with final cross-entropy increasing by less than 2% relative to the unregularized baseline. The recommended setting L1 = 2 × 10⁻⁵ reduces average non-zero activations from 911 per layer (in the unregularized 1.5B model with a feedforward hidden dimension of 5632) down to just 29, roughly 99.5% sparsity, with no measurable downstream performance loss.
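The sparsity percentage follows directly from the quoted counts. A short check, using only the figures given above (the function name is illustrative):

```python
HIDDEN_DIM = 5632  # feedforward hidden dimension of the 1.5B model


def sparsity(avg_nonzeros, hidden_dim=HIDDEN_DIM):
    """Fraction of hidden activations that are zero on average."""
    return 1.0 - avg_nonzeros / hidden_dim


print(f"unregularized: {100 * sparsity(911):.1f}% zeros")
print(f"L1 = 2e-5:     {100 * sparsity(29):.1f}% zeros")
```

By the same arithmetic, the unregularized ReLU model is already mostly sparse at about 84% zeros, and the L1 term pushes it to about 99.5%.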

One important point: at L1 = 2 × 10⁻⁵, over 30% of neurons become permanently inactive (dead neurons) on average across layers. The research team explores two mitigation strategies, scheduling the L1 warmup and applying targeted reinitialization to dead gate projection columns, and finds that the reinitialization approach maintains comparable sparsity levels while slightly improving both downstream accuracy and efficiency (+19.1% inference speedup vs. +17.9% for the baseline). This is listed as a direction for future work.
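A hedged sketch of what targeted reinitialization could look like: find neurons that never fire on a sample of tokens, then redraw only the matching columns of the gate projection. The article does not describe the detection window, the reinitialization distribution, or its schedule, so the function names, the Gaussian with `std=0.02`, and treating "zero on this sample" as "dead" are all assumptions.

```python
import random


def dead_neurons(hidden):
    """Indices of neurons that are zero for every token in `hidden` (tokens x neurons)."""
    n_neurons = len(hidden[0])
    return [j for j in range(n_neurons) if all(tok[j] == 0.0 for tok in hidden)]


def reinit_gate_columns(W_g, dead, std=0.02, seed=0):
    """Return a copy of W_g with only the dead neurons' columns redrawn."""
    rng = random.Random(seed)
    new_W = [list(row) for row in W_g]
    for j in dead:
        for i in range(len(new_W)):
            new_W[i][j] = rng.gauss(0.0, std)  # hypothetical init distribution
    return new_W


# Toy sample: 3 tokens, 4 hidden neurons; neurons 1 and 3 never fire.
hidden = [
    [0.4, 0.0, 0.0, 0.0],
    [0.0, 0.0, 1.2, 0.0],
    [0.9, 0.0, 0.0, 0.0],
]
W_g = [[0.1, -0.5, 0.3, -0.7], [0.2, -0.4, 0.1, -0.9]]

dead = dead_neurons(hidden)
W_g_new = reinit_gate_columns(W_g, dead)
print(dead, len(dead) / len(hidden[0]))
```

Columns belonging to live neurons are left untouched, which is what makes the reinitialization targeted.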

Measured Efficiency Gains

The efficiency results are reported on a single node of eight H100 PCIe GPUs, with a fixed sequence length of 2048 tokens. For the cross-scale comparison, the L1 coefficient is fixed at 2 × 10⁻⁵.

At smaller scales, sparsity delivers clear peak memory reductions during training:

| Model | Dense Peak Memory | Sparse Peak Memory | Change |
| --- | --- | --- | --- |
| 0.5B | 26.2 GB | 21.2 GB | −19.2% |
| 1B | 44.5 GB | 33.1 GB | −25.5% |
| 1.5B | 62.8 GB | 45.1 GB | −28.1% |

At 2B parameters, the sparse model uses a larger micro-batch (enabled by reduced activation memory at that scale), which leads to higher peak GPU memory (46.7 → 57.1 GB) but faster training throughput (+21.9%). The efficiency gains on all metrics for the 2B model:

- Forward execution throughput: 87.8 → 106 input tokens/ms (+20.5%)

- Energy per token: 7.85 → 6.51 mJ (−17.0%)

- Training step throughput: 22.4 → 27.3 input tokens/ms (+21.9%)
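The percentages above are relative changes of the sparse figure against the dense one. The small helper below (a hypothetical name) recomputes them from the rounded values quoted in this article; because those inputs are already rounded, a recomputed figure can differ from the published percentage by a few tenths of a point, as happens for forward throughput.

```python
def pct_change(dense, sparse):
    """Relative change of the sparse value versus the dense baseline, in percent."""
    return 100.0 * (sparse - dense) / dense


metrics_2b = {
    "forward throughput (tokens/ms)": (87.8, 106.0),
    "energy per token (mJ)": (7.85, 6.51),
    "training throughput (tokens/ms)": (22.4, 27.3),
    "peak memory (GB)": (46.7, 57.1),
}

for name, (dense, sparse) in metrics_2b.items():
    print(f"{name}: {pct_change(dense, sparse):+.1f}%")
```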

Across the full 0.5B–2B range, mean task accuracy of sparse and non-sparse models remains statistically indistinguishable. Efficiency benefits grow with model scale: larger models naturally develop lower average non-zero counts (dropping from 39 at 0.5B to 24 at 2B), which means the sparse kernels skip a proportionally greater share of computation.

Training speedups are also observed on NVIDIA's RTX PRO 6000 GPU, where the larger Streaming Multiprocessor count (188 vs. 114 on H100) allows sparse operations to run faster, suggesting these gains extend to less specialized hardware.

What the Sparsity Patterns Reveal

Sparsity is not uniform: the first two layers of a 28-layer 1.5B model are the least active, followed by a pronounced peak in non-zero activations across the early-middle layers, consistent with prior work suggesting this is where much of LLM reasoning and knowledge retrieval occurs. Separately, the first tokens in an input sequence activate far more neurons than later tokens, with an exponential decrease thereafter. The research team observed an inverse Pearson correlation of −0.996 between each layer's average non-zero count and its inference speedup contribution, confirming that the sparsest layers provide the greatest per-layer gains.
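For readers unfamiliar with the statistic, the sketch below shows how a Pearson correlation between per-layer non-zero counts and per-layer speedup contributions would be computed. The numbers are made-up toy values chosen to be perfectly linear, not the paper's per-layer measurements, and the function name is illustrative.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical toy data: more non-zeros per layer, smaller speedup contribution.
avg_nonzeros = [10.0, 20.0, 30.0, 40.0]
speedup_contribution = [4.0, 3.0, 2.0, 1.0]

r = pearson(avg_nonzeros, speedup_contribution)
print(r)
```

A value close to −1, like the −0.996 reported, means per-layer speedup falls almost linearly as the average non-zero count rises.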
